Cross-Entropy Loss: Unraveling its Role in Machine Learning

Cross-Entropy loss is used for training classification models.

What is Cross-Entropy Loss?

A loss function is a mathematical method for measuring the error of a machine learning model by quantifying the difference between predicted and actual values. The smaller the difference, the better the model fits the data. Loss functions therefore serve both as a measure of a model’s performance and as the signal that guides its improvement.

Cross-Entropy loss, or log loss, is often used for training classification models. It is easy to implement, widely supported by machine learning libraries, and requires labels encoded as numeric values for accurate loss calculation. This article will discuss the functioning and implementation of Cross-Entropy loss, its applications, challenges, and tips for better performance.

Understanding Cross-Entropy Loss

The Cross-Entropy loss compares the predicted probability distribution over classes with the actual distribution given by the labels. Its core mechanism is penalization: the further a predicted probability is from the true label, the larger the loss, and training a model means minimizing that penalty.

There are two main types of classification problems:

Binary classification: Classification tasks with only two labels for the output variable.

Multi-class classification: Classification tasks with more than two labels.

Assume that for binary classification, class labels are converted to the values 0 or 1. In a multi-class scenario, assume labels are one-hot encoded. For example, for a classification problem with three classes, if a data point belongs to the first class, its label will be represented as [1, 0, 0]. Similarly, the label will be [0, 1, 0] if it belongs to the second class.
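To make the encoding concrete, here is a minimal NumPy sketch of one-hot encoding. The helper name `one_hot` is hypothetical, and it assumes classes are given as integer indices starting at 0 (so the "first class" is index 0).

```python
import numpy as np

def one_hot(labels, num_classes):
    """Return a (len(labels), num_classes) array with a 1 at each label's index."""
    labels = np.asarray(labels)
    encoded = np.zeros((labels.size, num_classes), dtype=int)
    # For row k, set the column given by labels[k] to 1
    encoded[np.arange(labels.size), labels] = 1
    return encoded

# Three-class problem: one data point from each class
encoded = one_hot([0, 1, 2], num_classes=3)
print(encoded)
```

The first row is [1, 0, 0] and the second is [0, 1, 0], matching the labels described above.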

The formula of Cross-Entropy for binary classification is:

$$L = -\left[\, y \ln(p) + (1 - y) \ln(1 - p) \,\right]$$

Where:

y = Actual label

p = Predicted probability for the positive class
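As a rough sketch, the binary case can be written in a few lines of plain Python. This assumes the standard definition L = -[y ln(p) + (1 - y) ln(1 - p)] with the natural logarithm. The function name and the `eps` clipping constant are illustrative choices, not part of any library.

```python
import math

def binary_cross_entropy(y, p, eps=1e-15):
    """Loss for one data point: y is 0 or 1, p is the predicted P(y = 1)."""
    # Clip p away from exactly 0 and 1 so that log() stays finite
    p = min(max(p, eps), 1 - eps)
    return -(y * math.log(p) + (1 - y) * math.log(1 - p))

# Actual label is 0 (negative class)
loss_good = binary_cross_entropy(0, 0.2)  # model leans the right way, about 0.223
loss_bad = binary_cross_entropy(0, 0.8)   # model leans the wrong way, about 1.609
print(loss_good, loss_bad)
```

When y = 0 only the second term is active, so the loss depends on how much probability the model placed on the negative class.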

The formula of Cross-Entropy for multi-class classification is:

$$L = -\sum_{i=1}^{N} y_i \ln(p_i)$$

Where:

Σ = Sum over all N classes

y_i = True label of class i

p_i = Predicted probability of class i
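The multi-class case can be sketched the same way with NumPy, assuming the standard definition L = -Σ y_i ln(p_i) over one-hot labels. The function name and the sample probabilities below are made up for illustration.

```python
import numpy as np

def categorical_cross_entropy(y_true, p_pred, eps=1e-15):
    """Loss for one data point: y_true is one-hot, p_pred sums to 1."""
    y_true = np.asarray(y_true, dtype=float)
    # Clip so that a predicted probability of exactly 0 does not produce log(0)
    p_pred = np.clip(np.asarray(p_pred, dtype=float), eps, 1.0)
    return float(-np.sum(y_true * np.log(p_pred)))

y_true = [0, 1, 0]        # data point belongs to the second class
p_pred = [0.2, 0.7, 0.1]  # predicted probabilities for the three classes
loss = categorical_cross_entropy(y_true, p_pred)
print(loss)
```

Because the label is one-hot, only the term for the true class survives the sum, so the loss here reduces to -ln(0.7).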

Cross-Entropy uses logarithms to calculate penalties. The function imposes a heavier penalty on a prediction if it deviates significantly from the actual value. When the deviation is smaller, the penalty is also reduced.

For instance, consider a model predicting a candidate's gender, with male encoded as 1. If the actual label is 1 and the model assigns a probability of 0.9 to male, the penalty is -ln(0.9) ≈ 0.105. Conversely, if the model assigns a probability of only 0.1 to male, the penalty increases to -ln(0.1) ≈ 2.30.
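The arithmetic is easy to check, and extending it to a few more probabilities shows how quickly the penalty grows. This short sketch uses only the standard library; the list of probabilities is arbitrary.

```python
import math

# Probability the model assigns to the true class -> penalty -ln(p)
penalties = {p: -math.log(p) for p in (0.99, 0.9, 0.5, 0.1, 0.01)}

for p, penalty in penalties.items():
    print(f"p = {p:<4}  penalty = {penalty:.3f}")
```

The penalty rises from about 0.105 at p = 0.9 to about 2.303 at p = 0.1 and about 4.605 at p = 0.01, which is why confident wrong predictions are punished so heavily.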

Computing Cross-Entropy Loss

The steps involved in calculating the Cross-Entropy loss function typically include:

Prediction: The model outputs a probability for each class for every data point.

Loss per data point: The Cross-Entropy loss function compares the predicted probabilities against the true label. The resulting value tells us how “off” the model’s guess was for that particular data point.

Overall loss: To understand how well the model performs as a whole, we average the Cross-Entropy loss across all data points. During training this is computed on the training data; for evaluation it is usually computed on a validation or test data set.

Minimizing the loss: During training, an optimization algorithm adjusts the model parameters to reduce the overall loss.
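The steps above can be sketched end to end with a tiny one-feature logistic model trained by plain gradient descent. Everything here is an illustrative assumption: the toy data, the learning rate, the number of steps, and the helper names.

```python
import numpy as np

# Toy data (made up): one feature, binary labels
X = np.array([[0.5], [1.5], [2.5], [3.5]])
y = np.array([0, 0, 1, 1])

def predict_proba(X, w, b):
    """Sigmoid of a linear score: predicted P(y = 1) for each row of X."""
    return 1.0 / (1.0 + np.exp(-(X @ w + b)))

def per_point_loss(y, p, eps=1e-15):
    """Binary cross-entropy for each data point."""
    p = np.clip(p, eps, 1 - eps)
    return -(y * np.log(p) + (1 - y) * np.log(1 - p))

w, b = np.zeros(1), 0.0
learning_rate = 0.5
history = []

for step in range(200):
    p = predict_proba(X, w, b)          # prediction
    losses = per_point_loss(y, p)       # loss per data point
    history.append(losses.mean())       # overall (average) loss
    grad_w = X.T @ (p - y) / len(y)     # gradient of the average loss w.r.t. w
    grad_b = np.mean(p - y)             # gradient of the average loss w.r.t. b
    w -= learning_rate * grad_w         # minimizing the loss
    b -= learning_rate * grad_b

print(f"loss before: {history[0]:.3f}, after: {history[-1]:.3f}")
```

With all parameters at zero the model predicts 0.5 for every point, so the starting loss is ln(2) ≈ 0.693; the updates then push it lower.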

Cross-Entropy Loss in Python

Run the following code snippet in your Python environment to compute Cross-Entropy loss. Here, y_true holds the actual class labels encoded as numeric values, and y_pred holds the predicted probabilities:

```python
# !pip install scikit-learn
from sklearn.metrics import log_loss

# Example values (illustrative): true labels and predicted P(class 1)
y_true = [0, 1, 1, 0]
y_pred = [0.1, 0.8, 0.7, 0.3]

# Calculate the loss by providing actual and predicted values to log_loss
loss = log_loss(y_true, y_pred)
print(loss)
```

Applications in Machine Learning

The Cross-Entropy loss function is used in various classification models, including:

Logistic Regression

Logistic regression is commonly used for binary classification problems. It predicts a probability between 0 and 1 for the positive class. Cross-Entropy is applied to the predicted probabilities to train the model and gauge its performance.

For example, in the following snippet, we create dummy y_true and y_pred arrays using NumPy and calculate the Cross-Entropy loss on them:

```python
from sklearn.metrics import log_loss
import numpy as np

# Sample data
y_true = np.array([0, 1])  # True class labels

# Each row holds [P(class 0), P(class 1)] for one data point
y_pred = np.array([
    [0.9, 0.1],  # Predicted probabilities for data point 0
    [0.5, 0.5],  # Predicted probabilities for data point 1
])

loss = log_loss(y_true, y_pred)
print(f"Cross Entropy Loss (using log_loss): {loss}")
```
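The snippet above uses hand-written probabilities. As a hedged sketch of the full loop, the example below fits scikit-learn's `LogisticRegression` on a made-up toy data set and scores its `predict_proba` output with `log_loss`. The data and variable names are illustrative, and the model uses the library's default settings.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import log_loss

# Toy data (made up): a single feature and a binary label
X = np.array([[1.0], [2.0], [3.0], [4.0], [5.0], [6.0]])
y = np.array([0, 0, 0, 1, 1, 1])

model = LogisticRegression()
model.fit(X, y)

# predict_proba returns one row per data point: [P(class 0), P(class 1)]
proba = model.predict_proba(X)
loss = log_loss(y, proba)

# Reference point: a model that always answers 50/50
baseline = log_loss(y, np.full((len(y), 2), 0.5))

print(f"model loss: {loss:.3f}, 50/50 baseline: {baseline:.3f}")
```

Comparing against the 50/50 baseline (which scores ln(2) ≈ 0.693) gives a quick sanity check that the fitted model has learned something.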

Deep Neural Networks

Imagine you're training a deep neural network (DNN) to recognize handwritten digits (0-9). Here's how cross-entropy loss works with backpropagation:

Prediction: During training, the DNN takes an image of a digit and outputs a probability for each of the 10 digits. These probabilities are continuous values rather than hard yes/no decisions: the higher the probability for a digit, the more confident the model is that the image represents that digit.

Loss per Image: The cross-entropy loss function compares these predicted probabilities (e.g., 0.9 for the digit 9, with the remaining 0.1 spread across the other digits) with the actual digit label. This tells us how "wrong" the model’s guess was for that image.

Backpropagation: Because the loss is continuous and differentiable, backpropagation can calculate how much each weight and bias in the DNN contributed to the error, in the form of a gradient.

Learning from Mistakes: By understanding these contributions, the optimization algorithm can adjust each individual weight in the DNN to minimize the overall loss across all training images.

This approach teaches a deep neural network to alter its weights based on assigned penalties in order to generate reliable predictions.
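To illustrate why differentiability matters, the sketch below assumes the network's final layer is a softmax over 10 raw scores (logits), which is a common setup but is not stated above. Under that assumption, the gradient of the cross-entropy loss with respect to the logits is simply the predicted probabilities minus the one-hot label, and a finite-difference check confirms it. The random logits stand in for a real network's output.

```python
import numpy as np

def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    shifted = logits - np.max(logits)  # subtract the max for numerical stability
    exp = np.exp(shifted)
    return exp / exp.sum()

def cross_entropy(logits, label):
    """Cross-entropy loss for one example with an integer class label."""
    return -np.log(softmax(logits)[label])

rng = np.random.default_rng(0)
logits = rng.normal(size=10)  # stand-in for the network's 10 output scores
label = 9                     # the image actually shows a 9

probs = softmax(logits)
one_hot = np.zeros(10)
one_hot[label] = 1.0

# Analytic gradient of the loss with respect to the logits
analytic_grad = probs - one_hot

# Numerical check: nudge each logit slightly and measure the change in loss
eps = 1e-6
numeric_grad = np.zeros(10)
for i in range(10):
    bump = np.zeros(10)
    bump[i] = eps
    numeric_grad[i] = (
        cross_entropy(logits + bump, label) - cross_entropy(logits - bump, label)
    ) / (2 * eps)

print(np.allclose(analytic_grad, numeric_grad, atol=1e-6))
```

Backpropagation carries this output gradient backward through the earlier layers to obtain the gradient for every weight and bias.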

Challenges and Tips

When using Cross-Entropy loss, several limitations must be considered. Taking the precautions discussed below helps keep the loss computation effective.

1. Sensitive to Outliers

Cross-Entropy loss is heavily influenced by outliers, which can result in model overfitting. Because the penalty is logarithmic, it grows without bound as the probability assigned to the labeled class approaches zero, so a few outlying or mislabeled data points can dominate the total loss. This can lead the model to prioritize fitting the outliers over the underlying pattern in the data.

Tips to Handle Outlier Sensitivity

Removing outliers during data preprocessing helps to avoid overfitting. However, be careful not to remove legitimate data points. Alternatively, loss functions that are less sensitive to outliers, such as Huber loss (or, for classification, its modified Huber variant), can be used to prevent overfitting.
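A small numeric sketch shows how much a single extreme point can matter. The numbers are made up: nine data points where the model gives 0.9 to the labeled class, plus one outlier or mislabeled point where it gives only 0.001.

```python
import numpy as np

# Probability the model assigns to the labeled class for 10 data points.
# The last entry stands in for an outlier or mislabeled example.
p_true_class = np.array([0.9] * 9 + [0.001])

losses = -np.log(p_true_class)

mean_with_outlier = losses.mean()
mean_without_outlier = losses[:-1].mean()
outlier_share = losses[-1] / losses.sum()

print(f"mean loss with outlier:    {mean_with_outlier:.3f}")
print(f"mean loss without outlier: {mean_without_outlier:.3f}")
print(f"outlier's share of total:  {outlier_share:.0%}")
```

In this toy case one point out of ten accounts for most of the total loss, which is the effect that data cleaning or a more robust loss is meant to limit.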

2. Class Imbalance

Class imbalance occurs when one class has significantly more data points than the others. A model trained with Cross-Entropy loss finds it difficult to learn minority classes, since the majority class contributes far more terms to the overall loss. Always predicting the majority class becomes the easiest (lazy) way to minimize it.

Tips to Handle Class Imbalance

Oversampling of the minority class or undersampling of the majority class creates a balanced dataset. Regularization techniques like L1 and L2 regularization might help prevent the model from overfitting. Furthermore, tuning hyperparameters such as the learning rate, and especially assigning class weights so that errors on minority classes cost more, can guide the model to pay more attention to those classes.
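As an illustrative sketch of class weighting, the example below scores a "lazy" model on a made-up 95/5 data set, first with the plain average loss and then with a weighted average. The weights follow the common "balanced" heuristic, n_samples / (n_classes × class count); the function name and data are assumptions for the example.

```python
import numpy as np

# Imbalanced toy labels: 95 negatives, 5 positives
y = np.array([0] * 95 + [1] * 5)

# A "lazy" model that gives every data point a 5% chance of being positive
p_lazy = np.full(y.shape, 0.05)

def weighted_bce(y, p, weights):
    """Weighted average of the per-point binary cross-entropy."""
    losses = -(y * np.log(p) + (1 - y) * np.log(1 - p))
    return float(np.sum(weights * losses) / np.sum(weights))

counts = np.bincount(y)                          # [95, 5]
class_weights = len(y) / (len(counts) * counts)  # "balanced" heuristic
sample_weights = class_weights[y]                # one weight per data point

unweighted = weighted_bce(y, p_lazy, np.ones(len(y)))
weighted = weighted_bce(y, p_lazy, sample_weights)

print(f"unweighted loss: {unweighted:.3f}")
print(f"weighted loss:   {weighted:.3f}")
```

The lazy model looks acceptable under the plain average, but once each class counts equally its poor handling of the minority class shows up as a much larger loss.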

Cross-Entropy Loss Glossary

Backpropagation is an algorithm used in training artificial neural networks. It calculates the gradient of the loss function with respect to each weight by applying the chain rule backward through the network, layer by layer. An optimization algorithm then uses this information to adjust the weights, minimizing the loss function and improving the model's performance. Backpropagation is crucial for efficiently computing how each weight in a neural network contributes to the overall error.

One-hot encoding is a technique used to represent categorical variables as binary vectors. In this representation, each category is mapped to a binary vector of length n (where n is the number of categories), with a 1 in the position corresponding to the category and 0s elsewhere. For example, in a three-class problem, the categories might be represented as [1,0,0], [0,1,0], and [0,0,1].

Regularization is a technique used to prevent overfitting by adding a penalty term to the loss function. This penalty discourages the model from learning overly complex patterns that may not generalize to new data. Common forms include L1 (Lasso) and L2 (Ridge) regularization, which add terms based on the absolute or squared values of model parameters, respectively. Regularization helps to create models that perform well not just on training data but also on unseen data.
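A minimal sketch of how the penalty terms combine with a loss value, assuming the usual forms: L1 adds the sum of absolute weights and L2 adds the sum of squared weights, each scaled by a strength coefficient. The function name, weights, coefficients, and the 0.40 loss value are all made up for illustration.

```python
import numpy as np

def regularized_loss(data_loss, weights, l1=0.0, l2=0.0):
    """Add L1 and/or L2 penalties on the weights to a data loss value."""
    weights = np.asarray(weights, dtype=float)
    l1_penalty = l1 * np.sum(np.abs(weights))  # L1 (Lasso): absolute values
    l2_penalty = l2 * np.sum(weights ** 2)     # L2 (Ridge): squared values
    return float(data_loss + l1_penalty + l2_penalty)

weights = [0.5, -2.0, 1.5]
data_loss = 0.40  # an illustrative cross-entropy value

plain = regularized_loss(data_loss, weights)
with_l1 = regularized_loss(data_loss, weights, l1=0.01)  # 0.40 + 0.01 * 4.0
with_l2 = regularized_loss(data_loss, weights, l2=0.01)  # 0.40 + 0.01 * 6.5

print(plain, with_l1, with_l2)
```

Larger weights raise the total, so the optimizer is nudged toward smaller weights even when they fit the training data slightly less well.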

Conclusion

Cross-Entropy loss is an essential yet easy-to-use loss function for classification models. It guides optimization algorithms to adjust model weights and achieve better performance. Binary Cross-Entropy and Multi-class Cross-Entropy are the two types of Cross-Entropy functions. Both use logarithms to assign penalties to incorrect model predictions.

Cross-Entropy is a powerful tool that is widely used for classification tasks across industry. The key to mastering it lies in practice. Use tools like TensorFlow Playground to visualize how loss behaves while a neural network trains. Explore further resources and hands-on experimentation for a deeper understanding of Cross-Entropy loss.

- What is Entropy loss?

- Understanding Cross-Entropy Loss

- Computing Cross-Entropy Loss

- Applications in Machine Learning

- Challenges and Tips

- Cross Entropy Loss Glossary items

- Conclusion

Content

Start Free, Scale Easily

Try the fully-managed vector database built for your GenAI applications.

Try Zilliz Cloud for FreeKeep Reading

Nemo Guardrails: Elevating AI Safety and Reliability

In this article, we will provide an in-depth explanation of what Nemo Guardrails are, its practical applications, along with its integration.

Data Modeling Techniques Optimized for Vector Databases

This post explores various data modeling techniques for optimizing the performance of vector databases.

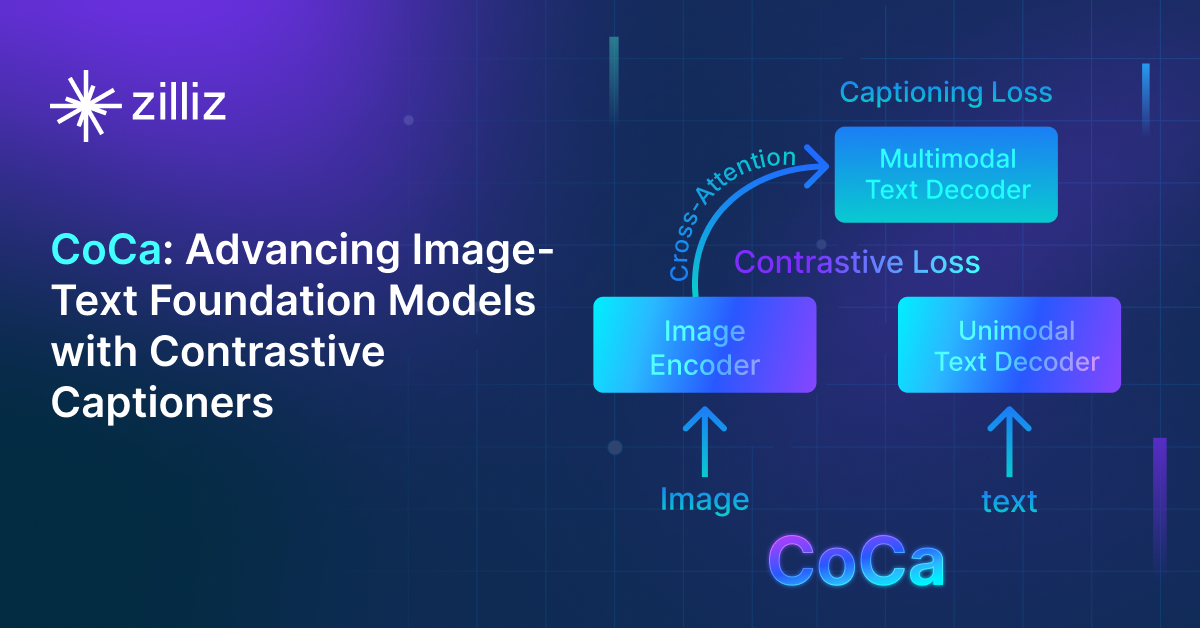

Understanding CoCa: Advancing Image-Text Foundation Models with Contrastive Captioners

Contrastive Captioners (CoCa) is an AI model developed by Microsoft that is designed to bridge the capabilities of language models and vision models.