Build RAG Chatbot with LangChain, LangChain vector store, Mistral AI Mistral Small, and IBM all-minilm-l6-v2

Introduction to RAG

Retrieval-Augmented Generation (RAG) improves GenAI applications, especially conversational AI, by combining pre-trained large language models (LLMs) like OpenAI’s GPT with external knowledge stored in vector databases such as Milvus and Zilliz Cloud. At query time, the most relevant pieces of that knowledge are retrieved and passed to the LLM as context, which allows for more accurate, contextually relevant, and up-to-date responses. A RAG pipeline usually consists of four basic components: a vector database, an embedding model, an LLM, and a framework.

Key Components We'll Use for This RAG Chatbot

This tutorial shows you how to build a simple RAG chatbot in Python using the following components:

- LangChain: An open-source framework that helps you orchestrate the interaction between LLMs, vector stores, embedding models, and other components, making it easier to build a RAG pipeline.

- LangChain in-memory vector store: An ephemeral vector store that keeps embeddings in memory and runs an exact, linear search for the most similar embeddings, using cosine similarity as the similarity metric. It is intended for demos and small datasets rather than production workloads. (If you want a more scalable solution for your apps or enterprise projects, we recommend Zilliz Cloud, a fully managed vector database service built on the open-source Milvus that offers a free tier supporting up to 1 million vectors.)

- Mistral AI Mistral Small: A lightweight transformer model that offers competitive performance with a reduced memory footprint, making it suitable for resource-constrained environments. It handles tasks like text generation and classification efficiently, which makes it a good fit for applications that need quick responses and low latency, such as chatbots and real-time analytics.

- IBM all-minilm-l6-v2: A compact, efficient transformer-based embedding model (sentence-transformers/all-minilm-l6-v2), accessed in this tutorial through IBM watsonx, that maps text to dense vectors and is optimized for fast inference. Its embeddings support natural language understanding tasks such as semantic search and information retrieval, making it a practical choice for chatbots, search engines, and data annotation.
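To make the "exact, linear search" performed by the in-memory vector store more concrete, here is a minimal pure-Python sketch of the idea: score every stored vector against the query with cosine similarity and keep the best matches. The function names and the tiny 3-dimensional vectors are assumptions made for illustration; this is not LangChain's implementation, and real embedding models produce vectors with hundreds of dimensions.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat a zero vector as similar to nothing
    return dot / (norm_a * norm_b)

def linear_search(query_vec, stored, k=2):
    """Score every stored vector against the query and return the top k."""
    scored = [(cosine_similarity(query_vec, vec), text) for text, vec in stored]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]

# Hypothetical hand-written "embeddings", used only for illustration.
stored = [
    ("Milvus is a vector database", [0.9, 0.1, 0.0]),
    ("Bananas are yellow", [0.0, 0.2, 0.9]),
    ("Zilliz Cloud hosts Milvus", [0.8, 0.3, 0.1]),
]

results = linear_search([1.0, 0.0, 0.0], stored, k=2)
for score, text in results:
    print(f"{score:.3f}  {text}")
```

Because every stored vector is scored on every query, the cost grows linearly with the number of vectors, which is why this approach suits demos better than large collections.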

By the end of this tutorial, you’ll have a functional chatbot capable of answering questions based on a custom knowledge base.

Note: Since we may use proprietary models in our tutorials, make sure you have the required API key beforehand.

Step 1: Install and Set Up LangChain

%pip install --quiet --upgrade langchain-text-splitters langchain-community langgraph beautifulsoup4

Step 2: Install and Set Up Mistral AI Mistral Small

pip install -qU "langchain[mistralai]"

import getpass
import os

# Prompt for the API key only if it is not already set in the environment
if not os.environ.get("MISTRAL_API_KEY"):
    os.environ["MISTRAL_API_KEY"] = getpass.getpass("Enter API key for Mistral AI: ")

from langchain.chat_models import init_chat_model

llm = init_chat_model("mistral-small-latest", model_provider="mistralai")

Step 3: Install and Set Up IBM all-minilm-l6-v2

pip install -qU langchain-ibm

import getpass
import os

# Prompt for the API key only if it is not already set in the environment
if not os.environ.get("WATSONX_APIKEY"):
    os.environ["WATSONX_APIKEY"] = getpass.getpass("Enter API key for IBM watsonx: ")

from langchain_ibm import WatsonxEmbeddings

# Replace the project_id placeholder with your own watsonx project ID
embeddings = WatsonxEmbeddings(
    model_id="sentence-transformers/all-minilm-l6-v2",
    url="https://us-south.ml.cloud.ibm.com",
    project_id="<WATSONX PROJECT_ID>",
)

Step 4: Install and Set Up LangChain vector store

pip install -qU langchain-core

from langchain_core.vectorstores import InMemoryVectorStore

vector_store = InMemoryVectorStore(embeddings)

Step 5: Build a RAG Chatbot

Now that you’ve set up all the components, let’s build a simple chatbot. We’ll use the Milvus introduction doc as a stand-in for a private knowledge base. You can replace it with your own dataset to customize your RAG chatbot.

import bs4
from langchain import hub
from langchain_community.document_loaders import WebBaseLoader
from langchain_core.documents import Document
from langchain_text_splitters import RecursiveCharacterTextSplitter
from langgraph.graph import START, StateGraph
from typing_extensions import List, TypedDict

# Load and chunk contents of the Milvus overview page
loader = WebBaseLoader(
    web_paths=("https://milvus.io/docs/overview.md",),
    bs_kwargs=dict(
        parse_only=bs4.SoupStrainer(
            class_=("doc-style doc-post-content")
        )
    ),
)
docs = loader.load()

text_splitter = RecursiveCharacterTextSplitter(chunk_size=1000, chunk_overlap=200)
all_splits = text_splitter.split_documents(docs)

# Index chunks
_ = vector_store.add_documents(documents=all_splits)

# Define prompt for question-answering
prompt = hub.pull("rlm/rag-prompt")

# Define state for application
class State(TypedDict):
    question: str
    context: List[Document]
    answer: str

# Define application steps
def retrieve(state: State):
    retrieved_docs = vector_store.similarity_search(state["question"])
    return {"context": retrieved_docs}

def generate(state: State):
    docs_content = "\n\n".join(doc.page_content for doc in state["context"])
    messages = prompt.invoke({"question": state["question"], "context": docs_content})
    response = llm.invoke(messages)
    return {"answer": response.content}

# Compile application and test
graph_builder = StateGraph(State).add_sequence([retrieve, generate])
graph_builder.add_edge(START, "retrieve")
graph = graph_builder.compile()
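The splitter above is configured with chunk_size=1000 and chunk_overlap=200. As a rough illustration of what those two numbers mean, the sketch below cuts a string into fixed-size windows in which each chunk repeats the tail of the previous one. This is a simplified assumption for teaching purposes: RecursiveCharacterTextSplitter prefers to break on separators such as paragraphs and line breaks, so its real output will differ. The helper name chunk_text is hypothetical.

```python
def chunk_text(text, chunk_size, chunk_overlap):
    """Split text into fixed-size windows that share chunk_overlap characters."""
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be non-negative and smaller than chunk_size")
    step = chunk_size - chunk_overlap  # how far each new window advances
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this window already reached the end of the text
    return chunks

sample = "abcdefghijklmnopqrstuvwxyz"
chunks = chunk_text(sample, chunk_size=10, chunk_overlap=4)
for chunk in chunks:
    print(chunk)
```

The overlap means a sentence that straddles a chunk boundary still appears in full in at least one chunk, at the cost of storing some text twice.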

Test the Chatbot

Yeah! You've built your own chatbot. Let's ask the chatbot a question.

response = graph.invoke({"question": "What data types does Milvus support?"})

print(response["answer"])

Example Output

Milvus supports various data types including sparse vectors, binary vectors, JSON, and arrays. Additionally, it handles common numerical and character types, making it versatile for different data modeling needs. This allows users to manage unstructured or multi-modal data efficiently.
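To see how the question moves through the retrieve and generate steps, here is a minimal sketch with no LangChain or LangGraph dependencies. It assumes the behavior relied on in the code above: each step receives the current state and returns a partial update that is merged back into it. The keyword-matching retriever, the prompt layout, and the KNOWLEDGE list are hypothetical stand-ins for the vector store, the rlm/rag-prompt template, and the indexed chunks.

```python
# Hypothetical knowledge base standing in for the indexed document chunks.
KNOWLEDGE = ["milvus stores vectors", "bananas are yellow"]

def retrieve(state):
    # Stand-in retriever: keep documents that share a word with the question.
    words = set(state["question"].lower().split())
    hits = [doc for doc in KNOWLEDGE if words & set(doc.lower().split())]
    return {"context": hits}

def generate(state):
    # Stand-in generator: build the prompt text that an LLM would receive.
    context = "\n\n".join(state["context"])
    return {"answer": f"Context:\n{context}\n\nQuestion: {state['question']}"}

def run_sequence(steps, state):
    """Call each step in order and merge its partial update into the state."""
    state = dict(state)  # copy so the caller's dict is left untouched
    for step in steps:
        state.update(step(state))
    return state

final_state = run_sequence([retrieve, generate], {"question": "what does milvus store"})
print(final_state["answer"])
```

The final state holds the question, the retrieved context, and the answer together, which is why the tutorial can read response["answer"] from the result of graph.invoke.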

Optimization Tips

As you build your RAG system, optimization is key to ensuring peak performance and efficiency. While setting up the components is an essential first step, fine-tuning each one will help you create a solution that works even better and scales seamlessly. In this section, we’ll share some practical tips for optimizing all these components, giving you the edge to build smarter, faster, and more responsive RAG applications.

LangChain optimization tips

To optimize LangChain, focus on minimizing redundant operations in your workflow by structuring your chains and agents efficiently. Use caching to avoid repeated computations, speeding up your system, and experiment with modular design to ensure that components like models or databases can be easily swapped out. This will provide both flexibility and efficiency, allowing you to quickly scale your system without unnecessary delays or complications.

LangChain in-memory vector store optimization tips

The LangChain in-memory vector store is an ephemeral vector store that keeps embeddings in memory and does an exact, linear search for the most similar embeddings. It has very limited features and is only intended for demos. If you plan to build a functional or even production-level solution, we recommend using Zilliz Cloud, which is a fully managed vector database service built on the open-source Milvus and offers a free tier supporting up to 1 million vectors.

Mistral AI Mistral Small optimization tips

Mistral Small is a compact, efficient model best suited for low-latency and cost-effective RAG applications. Optimize token usage by ensuring retrieval pipelines return highly targeted and concise context, which reduces unnecessary model computation. Use lightweight prompt compression to streamline input formatting and avoid redundant details. Set the temperature to 0.1–0.2 for factual consistency, and keep other sampling settings minimal to limit response variability. For real-time applications, cache answers to common queries to further improve speed. If you self-host an open-weight version of the model at scale rather than calling the hosted API, consider quantized versions (e.g., 4-bit or 8-bit precision) to reduce the memory footprint. Use batch inference to maximize throughput while minimizing per-call overhead.
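One simple way to keep the context targeted and concise is to cap how much retrieved text reaches the prompt. The sketch below keeps the highest-ranked chunks until a character budget is used up. It assumes the chunks are already sorted by relevance and uses character counts as a rough proxy for tokens; the helper name trim_context and the budget value are hypothetical, and a real tokenizer will count differently.

```python
def trim_context(ranked_chunks, max_chars):
    """Keep the highest-ranked chunks that fit within a character budget."""
    kept = []
    used = 0
    for chunk in ranked_chunks:
        # Every chunk after the first also costs a two-character "\n\n" separator.
        cost = len(chunk) + (2 if kept else 0)
        if used + cost > max_chars:
            break  # stop at the first chunk that does not fit
        kept.append(chunk)
        used += cost
    return "\n\n".join(kept)

# Hypothetical chunks, most relevant first.
ranked = ["a" * 40, "b" * 40, "c" * 40]
context = trim_context(ranked, max_chars=90)
print(len(context))
```

Stopping at the first chunk that does not fit preserves the relevance order, so lower-ranked text never displaces higher-ranked text.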

IBM all-minilm-l6-v2 optimization tips

To optimize the performance of IBM all-minilm-l6-v2 in a Retrieval-Augmented Generation (RAG) setup, consider streamlined query preprocessing (for example, removing stop words and normalizing text) so that input queries are concise and relevant. Caching embeddings and frequently retrieved results can significantly reduce latency, and embedding texts in batches lets you take advantage of parallelization. Fine-tuning the model on domain-specific data can improve relevance and accuracy; if you do, monitor hyperparameters such as the learning rate, and keep inputs within the model’s maximum token count so that text is not truncated. Lastly, make sure your retrieval system is tightly integrated with the generation step so that the context passed to the LLM stays coherent.
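The preprocessing, caching, and batching ideas can be combined in a few lines. The sketch below normalizes texts, embeds each unique text only once, and sends texts to the embedder in batches. The embedder fake_embed_batch is a hypothetical stand-in that returns one-dimensional vectors; in a LangChain setup, a batch method such as embed_documents on the embeddings object could play that role. The normalization here covers only case and whitespace, and the batch size is an arbitrary assumption.

```python
import re

def normalize(text):
    """Lowercase the text and collapse runs of whitespace."""
    return re.sub(r"\s+", " ", text).strip().lower()

def batched(items, batch_size):
    """Yield consecutive slices of at most batch_size items."""
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]

def embed_all(texts, embed_batch, batch_size=2):
    """Embed unique normalized texts in batches and reuse the cached vectors."""
    cache = {}
    normalized = [normalize(t) for t in texts]
    pending = list(dict.fromkeys(normalized))  # de-duplicate while keeping order
    for batch in batched(pending, batch_size):
        for text, vector in zip(batch, embed_batch(batch)):
            cache[text] = vector
    return [cache[t] for t in normalized]

calls = []  # records each batch sent to the embedder

def fake_embed_batch(batch):
    # Hypothetical embedder: returns a 1-dimensional vector per text.
    calls.append(list(batch))
    return [[float(len(text))] for text in batch]

vectors = embed_all(
    ["What is  Milvus?", "what is milvus?", "Zilliz Cloud", "RAG"],
    fake_embed_batch,
)
print(len(calls), vectors)
```

Four inputs result in three embedded texts and two embedder calls, because the duplicate question is served from the cache.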

By implementing these tips across your components, you'll be able to enhance the performance and functionality of your RAG system, ensuring it’s optimized for both speed and accuracy. Keep testing, iterating, and refining your setup to stay ahead in the ever-evolving world of AI development.

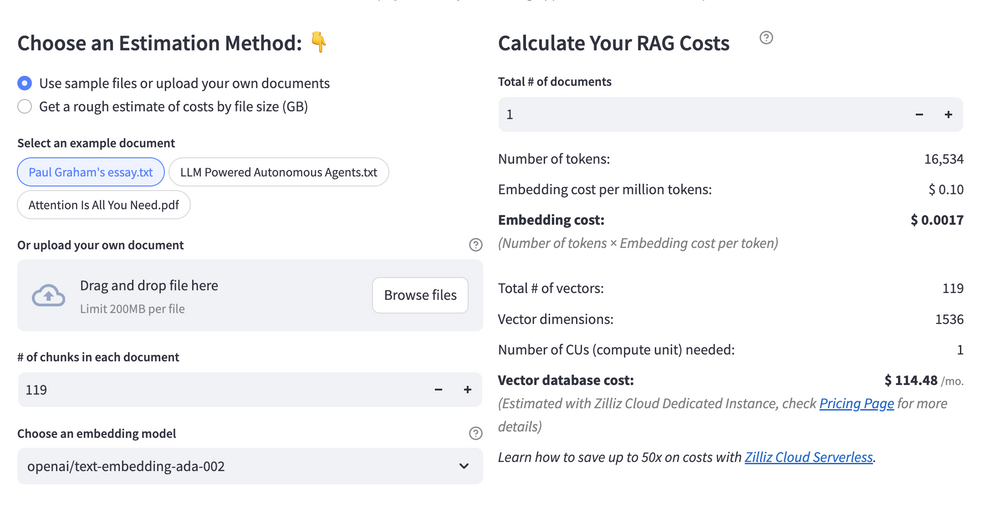

RAG Cost Calculator: A Free Tool to Calculate Your Cost in Seconds

Estimating the cost of a Retrieval-Augmented Generation (RAG) pipeline involves analyzing expenses across vector storage, compute resources, and API usage. Key cost drivers include vector database queries, embedding generation, and LLM inference.

RAG Cost Calculator is a free tool that quickly estimates the cost of building a RAG pipeline, including chunking, embedding, vector storage/search, and LLM generation. It also helps you identify cost-saving opportunities and achieve up to 10x cost reduction on vector databases with the serverless option.
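The arithmetic behind such an estimate can be sketched in a few lines: multiply token counts by a unit price for each stage and add the results. Everything numeric below is a placeholder assumption chosen to make the arithmetic easy to follow, not a real price from any provider, and the sketch leaves out vector storage and search costs. The function name estimate_rag_cost is hypothetical and is not how the RAG Cost Calculator itself works.

```python
def estimate_rag_cost(num_chunks, tokens_per_chunk, num_queries,
                      prompt_tokens_per_query, completion_tokens_per_query,
                      price_per_1k):
    """Return a per-component cost breakdown from token counts and unit prices."""
    embedding_tokens = num_chunks * tokens_per_chunk
    input_tokens = num_queries * prompt_tokens_per_query
    output_tokens = num_queries * completion_tokens_per_query
    costs = {
        "embedding": embedding_tokens / 1000 * price_per_1k["embedding"],
        "llm_input": input_tokens / 1000 * price_per_1k["llm_input"],
        "llm_output": output_tokens / 1000 * price_per_1k["llm_output"],
    }
    costs["total"] = sum(costs.values())
    return costs

# Placeholder prices per 1,000 tokens (hypothetical, for illustration only).
placeholder_prices = {"embedding": 0.0001, "llm_input": 0.001, "llm_output": 0.003}

costs = estimate_rag_cost(
    num_chunks=1000,
    tokens_per_chunk=250,
    num_queries=10000,
    prompt_tokens_per_query=1200,
    completion_tokens_per_query=150,
    price_per_1k=placeholder_prices,
)
for name, value in costs.items():
    print(f"{name}: {value:.3f}")
```

With these placeholder numbers the LLM input tokens dominate the total, which is consistent with the earlier advice to keep retrieved context concise.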

Calculate your RAG cost

What Have You Learned?

By working through this tutorial, you’ve built a RAG system from the ground up using powerful tools! You learned how LangChain acts as the glue that connects your pipeline, orchestrating the flow of data between components. The LangChain vector store served as the memory bank, storing embedded chunks and retrieving the most relevant ones, while IBM’s all-minilm-l6-v2 embedding model transformed raw text into numerical representations that make unstructured data searchable. Mistral AI’s Mistral Small then took center stage, using the retrieved chunks as context to generate natural, context-aware responses. Together, these pieces form a RAG pipeline that can power chatbots that answer questions with depth, search engines that understand nuance, or tools that summarize complex documents in seconds. Plus, you picked up tips for optimizing performance, like keeping retrieved context concise and balancing speed with accuracy, and discovered the free RAG cost calculator for estimating expenses before scaling up.

But this isn’t just about following steps—it’s about empowerment. You now have the blueprint to create systems that think smarter, adapt faster, and solve real-world problems. Whether you’re enhancing customer support, building personalized learning platforms, or experimenting with AI-driven analytics, your toolkit is ready. The future of intelligent applications is wide open, and you’re equipped to shape it. So fire up your code editor, tweak those parameters, and let your creativity run wild. Every line of code you write brings us closer to a world where technology understands us better. Start building, keep iterating, and who knows—your next project might just redefine what’s possible. The adventure is yours to own—go make it epic! 🚀

Further Resources

🌟 In addition to this RAG tutorial, unleash your full potential with these incredible resources to level up your RAG skills.

- How to Build a Multimodal RAG | Documentation

- How to Enhance the Performance of Your RAG Pipeline

- Graph RAG with Milvus | Documentation

- How to Evaluate RAG Applications - Zilliz Learn

- Generative AI Resource Hub | Zilliz

We'd Love to Hear What You Think!

We’d love to hear your thoughts! 🌟 Leave your questions or comments below or join our vibrant Milvus Discord community to share your experiences, ask questions, or connect with thousands of AI enthusiasts. Your journey matters to us!

If you like this tutorial, show your support by giving our Milvus GitHub repo a star ⭐—it means the world to us and inspires us to keep creating! 💖
