Build a RAG Chatbot with Haystack, Haystack In-memory store, Google Vertex AI Gemini 2.0 Flash Thinking, and Amazon Bedrock Cohere Embed-English-v3

Introduction to RAG

Retrieval-Augmented Generation (RAG) is a game-changer for GenAI applications, especially in conversational AI. It combines the power of pre-trained large language models (LLMs) like OpenAI’s GPT with external knowledge sources stored in vector databases such as Milvus and Zilliz Cloud, allowing for more accurate, contextually relevant, and up-to-date response generation. A RAG pipeline usually consists of four basic components: a vector database, an embedding model, an LLM, and a framework.
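To make the four roles concrete, here is a minimal, dependency-free sketch of the retrieve-then-prompt flow. It is not how Haystack or any vector database works internally: the bag-of-words "embedding" and the function names (embed, cosine, retrieve, build_prompt) are assumptions made up for illustration, and the final LLM call is left out.

```python
import math
import re
from collections import Counter

def embed(text):
    """Toy 'embedding': a bag-of-words count vector (stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, docs, top_k=1):
    """Rank documents by similarity to the query (the 'vector database' role)."""
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)[:top_k]

def build_prompt(query, context):
    """Assemble the text that would be sent to the LLM (the 'framework' role)."""
    joined = "\n".join(context)
    return f"Context:\n{joined}\n\nQuestion: {query}\nAnswer:"

docs = [
    "Milvus is an open-source vector database.",
    "Haystack is a Python framework for building LLM applications.",
]
top_docs = retrieve("What is Milvus?", docs)
prompt = build_prompt("What is Milvus?", top_docs)
print(prompt)
```

In the real pipeline built later in this tutorial, each of these toy functions is replaced by a Haystack component.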

Key Components We'll Use for This RAG Chatbot

This tutorial shows you how to build a simple RAG chatbot in Python using the following components:

- Haystack: An open-source Python framework designed for building production-ready NLP applications, particularly question answering and semantic search systems. Haystack excels at retrieving information from large document collections through its modular architecture that combines retrieval and reader components. Ideal for developers creating search applications, chatbots, and knowledge management systems that require efficient document processing and accurate information extraction from unstructured text.

- Haystack in-memory store: A very simple in-memory document store with no extra services or dependencies. It is great for experimenting with Haystack, but we do not recommend it for production. For a more scalable solution for your apps or enterprise projects, we recommend Zilliz Cloud, a fully managed vector database service built on the open-source Milvus that offers a free tier supporting up to 1 million vectors.

- Google Vertex AI Gemini 2.0 Flash: A lightweight, high-speed AI model optimized for rapid inference and scalable tasks. It excels in real-time applications requiring low latency and cost-efficiency, such as chatbots, content summarization, and data processing. Ideal for enterprises needing fast, reliable outputs without compromising performance in high-throughput environments.

- AmazonBedrock Cohere Embed-English-v3: A state-of-the-art text embedding model designed to convert English text into high-dimensional vector representations, excelling in semantic understanding and scalability. Its strengths include robust performance in semantic search, clustering, and retrieval-augmented generation (RAG), making it ideal for applications like recommendation systems, document similarity analysis, and AI-driven content organization within enterprise workflows.

By the end of this tutorial, you’ll have a functional chatbot capable of answering questions based on a custom knowledge base.

Note: Since we may use proprietary models in our tutorials, make sure you have the required API key beforehand.

Step 1: Install and Set Up Haystack

pip install haystack-ai markdown-it-py mdit_plain

import os

import requests

from haystack import Pipeline

from haystack.components.converters import MarkdownToDocument

from haystack.components.preprocessors import DocumentSplitter

from haystack.components.writers import DocumentWriter

Step 2: Install and Set Up Google Vertex AI Gemini 2.0 Flash Thinking

Using the VertexAIGeminiGenerator with Haystack requires authentication through Google Cloud Application Default Credentials (ADC). This means your application must be set up with credentials that allow it to access Google Cloud services. If you're not sure how to configure ADC, check the official Google documentation for setup instructions.

It's important to use a Google Cloud account that has the right permissions to access a project with Google Vertex AI endpoints. Without proper access, the generator won’t work as expected.

To find your project ID, you can either look it up in the Google Cloud Console under the resource manager or list your projects from the terminal with the gcloud CLI (for example, by running gcloud projects list).

Now let's install and set up this model.

pip install google-vertex-haystack

from haystack_integrations.components.generators.google_vertex import VertexAIGeminiGenerator

generator = VertexAIGeminiGenerator(model="gemini-2.0-flash-thinking-exp-01-21")

Step 3: Install and Set Up AmazonBedrock cohere embed-english-v3

Amazon Bedrock is a fully managed service that makes high-performing foundation models from leading AI startups and Amazon available through a unified API.

To use Amazon Bedrock embedding models with Haystack, initialize an AmazonBedrockTextEmbedder (for queries) and an AmazonBedrockDocumentEmbedder (for documents) with the model name. The AWS credentials (aws_access_key_id, aws_secret_access_key, and aws_region_name) should be set as environment variables, provided through your standard AWS configuration, or passed as Secret arguments. Make sure the region you set supports Amazon Bedrock.
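Before constructing the embedders, it can help to fail early when a credential is missing. The sketch below is one possible check, not part of the Haystack or AWS APIs: the helper name missing_env_vars and the example values are hypothetical, and the variable names simply mirror the ones set in the code that follows.

```python
import os

REQUIRED_AWS_VARS = ("AWS_ACCESS_KEY_ID", "AWS_SECRET_ACCESS_KEY", "AWS_DEFAULT_REGION")

def missing_env_vars(names, environ=None):
    """Return the names that are unset or empty in the given environment mapping."""
    env = os.environ if environ is None else environ
    return [name for name in names if not env.get(name)]

# Example with an explicit mapping (hypothetical values) instead of the real environment.
example_env = {"AWS_ACCESS_KEY_ID": "abc", "AWS_DEFAULT_REGION": "us-east-1"}
missing = missing_env_vars(REQUIRED_AWS_VARS, example_env)
print("Missing variables:", missing)
```

Calling missing_env_vars(REQUIRED_AWS_VARS) without the second argument checks the real process environment.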

Now, let's start installing and setting up models with Amazon Bedrock.

pip install amazon-bedrock-haystack

import os

from haystack_integrations.components.embedders.amazon_bedrock import AmazonBedrockTextEmbedder

from haystack_integrations.components.embedders.amazon_bedrock import AmazonBedrockDocumentEmbedder

from haystack.dataclasses import Document

os.environ["AWS_ACCESS_KEY_ID"] = "..."

os.environ["AWS_SECRET_ACCESS_KEY"] = "..."

os.environ["AWS_DEFAULT_REGION"] = "us-east-1" # just an example

# Queries and documents use different input types with this model.

text_embedder = AmazonBedrockTextEmbedder(model="cohere.embed-english-v3", input_type="search_query")

document_embedder = AmazonBedrockDocumentEmbedder(model="cohere.embed-english-v3", input_type="search_document")

Step 4: Install and Set Up Haystack In-memory store

from haystack.document_stores.in_memory import InMemoryDocumentStore

from haystack.components.retrievers import InMemoryEmbeddingRetriever

document_store = InMemoryDocumentStore()

retriever = InMemoryEmbeddingRetriever(document_store=document_store)
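Conceptually, an in-memory store paired with an embedding retriever keeps vectors in process memory and scans all of them for every query. The class below is a simplified, hypothetical stand-in (TinyEmbeddingStore is not a Haystack class), and the use of cosine similarity is an assumption; the real store's scoring function may differ.

```python
import math

class TinyEmbeddingStore:
    """Hypothetical, simplified stand-in for an in-memory store plus embedding retriever."""

    def __init__(self):
        self._items = []  # list of (text, embedding) pairs kept in process memory

    def write(self, text, embedding):
        self._items.append((text, list(embedding)))

    @staticmethod
    def _cosine(a, b):
        dot = sum(x * y for x, y in zip(a, b))
        norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
        return dot / norm if norm else 0.0

    def search(self, query_embedding, top_k=3):
        """Brute-force scan: score every stored vector, return the best top_k texts."""
        scored = [(self._cosine(query_embedding, emb), text) for text, emb in self._items]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [text for _, text in scored[:top_k]]

store = TinyEmbeddingStore()
store.write("about vector databases", [1.0, 0.0])
store.write("about cooking", [0.0, 1.0])
hits = store.search([0.9, 0.1], top_k=1)
print(hits)
```

The linear scan is the reason this approach suits experiments rather than large collections: query time grows with the number of stored vectors.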

Step 5: Build a RAG Chatbot

Now that you’ve set up all the components, let’s build a simple chatbot. We’ll use the Milvus introduction doc as a private knowledge base. You can replace it with your own dataset to customize your RAG chatbot.

url = 'https://raw.githubusercontent.com/milvus-io/milvus-docs/refs/heads/v2.5.x/site/en/about/overview.md'

example_file = 'example_file.md'

response = requests.get(url)

with open(example_file, 'wb') as f:
    f.write(response.content)

file_paths = [example_file] # You can replace it with your own file paths.

indexing_pipeline = Pipeline()

indexing_pipeline.add_component("converter", MarkdownToDocument())

indexing_pipeline.add_component("splitter", DocumentSplitter(split_by="sentence", split_length=2))

indexing_pipeline.add_component("embedder", document_embedder)

indexing_pipeline.add_component("writer", DocumentWriter(document_store))

indexing_pipeline.connect("converter", "splitter")

indexing_pipeline.connect("splitter", "embedder")

indexing_pipeline.connect("embedder", "writer")

indexing_pipeline.run({"converter": {"sources": file_paths}})

# print("Number of documents:", document_store.count_documents())
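The splitter in the indexing pipeline is configured with split_by="sentence" and split_length=2, which means each stored chunk holds two consecutive sentences. The sketch below imitates that grouping in plain Python. The function name is hypothetical and the regex-based sentence boundary is a deliberately naive assumption, so its output can differ from what DocumentSplitter produces.

```python
import re

def split_into_sentence_chunks(text, split_length=2):
    """Group consecutive sentences into chunks of `split_length` sentences each."""
    # Naive sentence boundary: split after '.', '!' or '?' followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [
        " ".join(sentences[i:i + split_length])
        for i in range(0, len(sentences), split_length)
    ]

chunks = split_into_sentence_chunks(
    "Milvus is a vector database. It is open source. It scales well."
)
for chunk in chunks:
    print(chunk)
```

Smaller chunks make retrieval more precise but give the generator less context per hit, so split_length is worth tuning for your own documents.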

question = "What is Milvus?" # You can replace it with your own question.

retrieval_pipeline = Pipeline()

retrieval_pipeline.add_component("embedder", text_embedder)

retrieval_pipeline.add_component("retriever", retriever)

retrieval_pipeline.connect("embedder", "retriever")

retrieval_results = retrieval_pipeline.run({"embedder": {"text": question}})

# for doc in retrieval_results["retriever"]["documents"]:

# print(doc.content)

# print("-" * 10)

from haystack.utils import Secret

from haystack.components.builders import PromptBuilder

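# A component instance can belong to only one pipeline, so create fresh instances for the RAG pipeline.

retriever = InMemoryEmbeddingRetriever(document_store=document_store)

text_embedder = AmazonBedrockTextEmbedder(model="cohere.embed-english-v3", input_type="search_query")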

prompt_template = """Answer the following query based on the provided context. If the context does
not include an answer, reply with 'I don't know'.\n
Query: {{query}}
Documents:
{% for doc in documents %}
{{ doc.content }}
{% endfor %}
Answer:
"""

rag_pipeline = Pipeline()

rag_pipeline.add_component("text_embedder", text_embedder)

rag_pipeline.add_component("retriever", retriever)

rag_pipeline.add_component("prompt_builder", PromptBuilder(template=prompt_template))

rag_pipeline.add_component("generator", generator)

rag_pipeline.connect("text_embedder.embedding", "retriever.query_embedding")

rag_pipeline.connect("retriever.documents", "prompt_builder.documents")

rag_pipeline.connect("prompt_builder", "generator")

results = rag_pipeline.run({"text_embedder": {"text": question}, "prompt_builder": {"query": question},})

print('RAG answer:\n', results["generator"]["replies"][0])
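To see roughly what text reaches the generator, the prompt template above can be approximated with plain string formatting. This is only a sketch: render_prompt is a hypothetical helper, not the PromptBuilder API, and its whitespace will not match the Jinja output exactly.

```python
def render_prompt(query, documents):
    """Plain-Python approximation of the prompt the template above produces."""
    lines = [
        "Answer the following query based on the provided context. "
        "If the context does not include an answer, reply with 'I don't know'.",
        "",
        f"Query: {query}",
        "Documents:",
    ]
    lines.extend(documents)  # one retrieved chunk per line
    lines.append("Answer:")
    return "\n".join(lines)

rendered = render_prompt("What is Milvus?", ["Milvus is a vector database."])
print(rendered)
```

Printing the rendered prompt like this is a quick way to check whether a poor answer comes from retrieval (wrong chunks in the prompt) or from generation.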

Optimization Tips

As you build your RAG system, optimization is key to ensuring peak performance and efficiency. While setting up the components is an essential first step, fine-tuning each one will help you create a solution that works even better and scales seamlessly. In this section, we’ll share some practical tips for optimizing all these components, giving you the edge to build smarter, faster, and more responsive RAG applications.

Haystack optimization tips

To optimize Haystack in a RAG setup, back the pipeline with a document store built for scalable similarity search, such as Milvus, rather than the in-memory store. Tune document store settings, such as index type and storage backend, to balance speed and accuracy. Generate embeddings in batches to reduce latency and the number of API calls. Cache results for frequently queried documents to avoid redundant computation. If your pipeline includes an extractive reader, choose a lightweight yet accurate transformer model such as DistilBERT to speed up response times. Apply query rewriting or filtering so that the most relevant documents are retrieved for generation. Finally, evaluate the pipeline on real-world queries with Haystack's evaluation tools and refine your setup iteratively.
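The batching advice can be sketched independently of any embedding service. In the example below, batched and embed_in_batches are hypothetical helpers, and fake_embed_batch is a placeholder for whatever call actually produces embeddings; the default batch size of 32 is an arbitrary assumption.

```python
def batched(items, batch_size):
    """Yield consecutive slices of `items` with at most `batch_size` elements each."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]

def embed_in_batches(texts, embed_batch, batch_size=32):
    """Call `embed_batch` once per batch instead of once per text."""
    vectors = []
    for batch in batched(texts, batch_size):
        vectors.extend(embed_batch(batch))
    return vectors

# Hypothetical embedding function: maps each text to a one-dimensional vector
# and records how many texts each call received.
calls = []

def fake_embed_batch(batch):
    calls.append(len(batch))
    return [[float(len(text))] for text in batch]

vectors = embed_in_batches(["a", "bb", "ccc", "dddd", "eeeee"], fake_embed_batch, batch_size=2)
print(calls, vectors)
```

Five texts with a batch size of 2 result in three calls instead of five; with a remote API, that reduction in round trips is where the latency savings come from.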

Haystack in-memory store optimization tips

The Haystack in-memory store is a very simple in-memory document store with no extra services or dependencies. We recommend using it only to experiment with RAG pipelines in Haystack, not in production. For a more scalable solution for your apps or enterprise projects, we recommend Zilliz Cloud, a fully managed vector database service built on the open-source Milvus that offers a free tier supporting up to 1 million vectors.

Google Vertex AI Gemini 2.0 Flash optimization tips

To optimize Gemini 2.0 Flash in RAG, fine-tune input chunking to balance context relevance and token limits—aim for 512–1024 tokens. Use structured queries with explicit filters (e.g., metadata, date ranges) to improve retrieval precision. Adjust temperature (0.1–0.3) and top-p (0.7–0.9) to reduce hallucination while maintaining coherence. Cache frequent retrievals to minimize latency and costs. Preprocess documents to remove noise and enhance embeddings. Test hybrid search (keyword + vector) for complex queries. Monitor token usage and response quality via Vertex AI’s logging tools for iterative tuning.
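One way to experiment with chunk sizes is a simple budget-based chunker. The sketch below counts whitespace-separated words as a rough proxy for tokens, which is an assumption: real tokenizers count differently, so treat the budget as approximate. The function name and the overlap parameter are hypothetical.

```python
def chunk_by_token_budget(text, max_tokens=512, overlap=0):
    """Split text into chunks of at most `max_tokens` whitespace-separated words."""
    if max_tokens < 1 or not 0 <= overlap < max_tokens:
        raise ValueError("need max_tokens >= 1 and 0 <= overlap < max_tokens")
    words = text.split()
    step = max_tokens - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_tokens]))
        if start + max_tokens >= len(words):
            break  # the last window already reached the end of the text
    return chunks

sample = " ".join(f"w{i}" for i in range(10))
small_chunks = chunk_by_token_budget(sample, max_tokens=4, overlap=1)
for chunk in small_chunks:
    print(chunk)
```

A small overlap repeats the last words of one chunk at the start of the next, which can help when an answer straddles a chunk boundary.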

AmazonBedrock Cohere Embed-English-v3 optimization tips

To optimize Cohere Embed-English-v3 in RAG, preprocess input text by removing redundant whitespace, normalizing casing, and filtering low-relevance content to reduce noise. Use batch embedding generation for bulk documents to minimize API calls and latency. Set the input_type parameter to match the use case ("search_document" when embedding documents, "search_query" when embedding queries) so that the embeddings are context-aware. Experiment with chunk sizes (e.g., 256-512 tokens) to balance semantic capture and computational efficiency. Cache frequent or static embeddings to avoid reprocessing. Monitor embedding quality via cosine similarity checks and fine-tune retrieval thresholds for your dataset.
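The preprocessing and caching tips combine naturally: normalize the text first, then use the normalized form as the cache key. The sketch below assumes a hypothetical embed_fn callable that maps a string to a list of floats; normalize and EmbeddingCache are illustrative names, not part of any library.

```python
import hashlib
import re

def normalize(text):
    """Collapse runs of whitespace and lowercase the text before embedding."""
    return re.sub(r"\s+", " ", text).strip().lower()

class EmbeddingCache:
    """Memoize embeddings by a hash of the normalized text."""

    def __init__(self, embed_fn):
        self._embed_fn = embed_fn  # hypothetical callable: str -> list of floats
        self._cache = {}
        self.misses = 0  # number of times embed_fn was actually called

    def get(self, text):
        cleaned = normalize(text)
        key = hashlib.sha256(cleaned.encode("utf-8")).hexdigest()
        if key not in self._cache:
            self.misses += 1
            self._cache[key] = self._embed_fn(cleaned)
        return self._cache[key]

# Placeholder embedding function for the demo: the vector is just the text length.
cache = EmbeddingCache(lambda t: [float(len(t))])
first = cache.get("  Hello   World ")
second = cache.get("hello world")
print(first, second, cache.misses)
```

Because both inputs normalize to the same string, the second lookup is served from the cache and the embedding function runs only once.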

By implementing these tips across your components, you'll be able to enhance the performance and functionality of your RAG system, ensuring it’s optimized for both speed and accuracy. Keep testing, iterating, and refining your setup to stay ahead in the ever-evolving world of AI development.

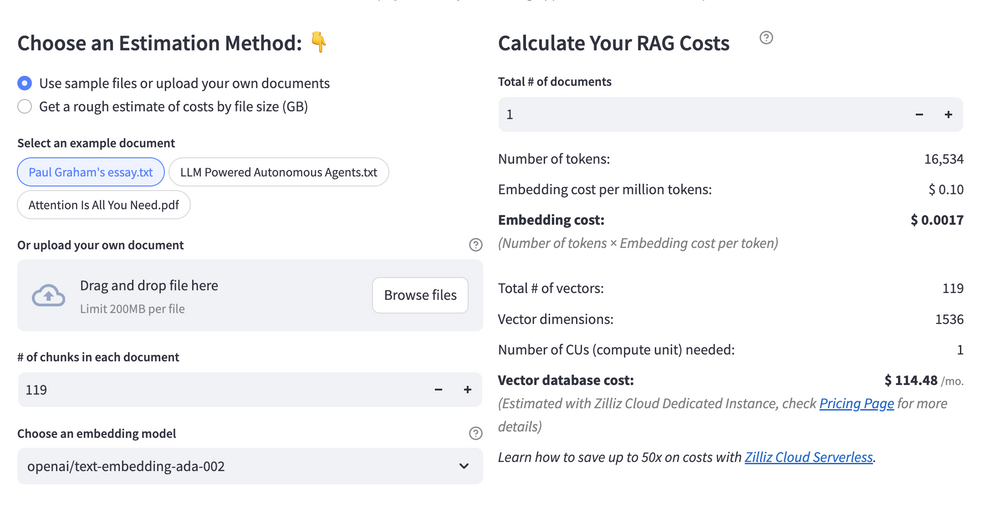

RAG Cost Calculator: A Free Tool to Calculate Your Cost in Seconds

Estimating the cost of a Retrieval-Augmented Generation (RAG) pipeline involves analyzing expenses across vector storage, compute resources, and API usage. Key cost drivers include vector database queries, embedding generation, and LLM inference.

RAG Cost Calculator is a free tool that quickly estimates the cost of building a RAG pipeline, including chunking, embedding, vector storage/search, and LLM generation. It also helps you identify cost-saving opportunities and achieve up to 10x cost reduction on vector databases with the serverless option.
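For a back-of-the-envelope estimate, the main cost drivers can be combined with simple arithmetic. The sketch below is not the calculator's method: the function name, parameters, and every number are placeholders rather than real prices, and vector storage and search costs are left out for brevity.

```python
def estimate_rag_cost(
    num_chunks,
    tokens_per_chunk,
    num_queries,
    prompt_tokens_per_query,
    embed_price_per_1k_tokens,
    llm_price_per_1k_tokens,
):
    """Rough cost split: one-off embedding of the corpus plus per-query LLM usage."""
    embedding_cost = num_chunks * tokens_per_chunk / 1000 * embed_price_per_1k_tokens
    generation_cost = num_queries * prompt_tokens_per_query / 1000 * llm_price_per_1k_tokens
    return {
        "embedding": embedding_cost,
        "generation": generation_cost,
        "total": embedding_cost + generation_cost,
    }

# All numbers below are placeholders, not real prices.
cost = estimate_rag_cost(
    num_chunks=1000, tokens_per_chunk=500,
    num_queries=100, prompt_tokens_per_query=2000,
    embed_price_per_1k_tokens=0.5, llm_price_per_1k_tokens=1.0,
)
print(cost)
```

Plugging in your providers' current prices shows which term dominates; for query-heavy workloads it is usually the per-query generation cost, since every prompt carries the retrieved context.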

Calculate your RAG cost


What Have You Learned?

By diving into this tutorial, you’ve unlocked the power of building a RAG system from the ground up! You’ve seen how Haystack acts as the backbone, seamlessly connecting every piece of the puzzle. The Haystack In-memory Document Store became your go-to for lightning-fast data retrieval, proving that you don’t always need complex infrastructure to handle vector storage efficiently. Then came Google Vertex AI Gemini 2.0, your creative powerhouse, generating human-like responses that feel natural and insightful. Pairing it with Amazon Bedrock’s Cohere Embed-English-v3 model, you learned how to transform text into rich, meaningful embeddings—turning unstructured data into a searchable treasure trove. Together, these tools formed a dynamic RAG pipeline: ingesting data, retrieving context, and synthesizing answers with precision. And let’s not forget the bonus insights! You picked up optimization tricks like balancing chunk sizes for embeddings and tuning retrieval parameters to boost performance. The free RAG cost calculator added extra confidence, helping you estimate expenses and scale smarter.

Now it’s your turn to take this knowledge and run with it! Imagine the applications you can build—chatbots that understand nuance, search engines that feel almost psychic, or tools that democratize access to complex information. The possibilities are endless, and you’ve got everything you need to start experimenting. Don’t just stop here—tweak, iterate, and innovate. Play with different models, explore hybrid retrieval strategies, or integrate real-time data streams. Remember, every optimization you make and every line of code you write brings us closer to smarter, more intuitive AI. So fire up your IDE, embrace the trial-and-error magic, and build something that blows minds. The future of intelligent applications is in your hands—go shape it! 🚀

Further Resources

🌟 In addition to this RAG tutorial, unleash your full potential with these incredible resources to level up your RAG skills.

- How to Build a Multimodal RAG | Documentation

- How to Enhance the Performance of Your RAG Pipeline

- Graph RAG with Milvus | Documentation

- How to Evaluate RAG Applications - Zilliz Learn

- Generative AI Resource Hub | Zilliz

We'd Love to Hear What You Think!

We’d love to hear your thoughts! 🌟 Leave your questions or comments below or join our vibrant Milvus Discord community to share your experiences, ask questions, or connect with thousands of AI enthusiasts. Your journey matters to us!

If you like this tutorial, show your support by giving our Milvus GitHub repo a star ⭐—it means the world to us and inspires us to keep creating! 💖

