Nomic / nomic-embed-vision-v1.5

Task: Embedding

Modality: Image

Similarity Metric: Cosine

License: Apache 2.0

Dimensions: 768

Max Input Tokens:

Price: Free

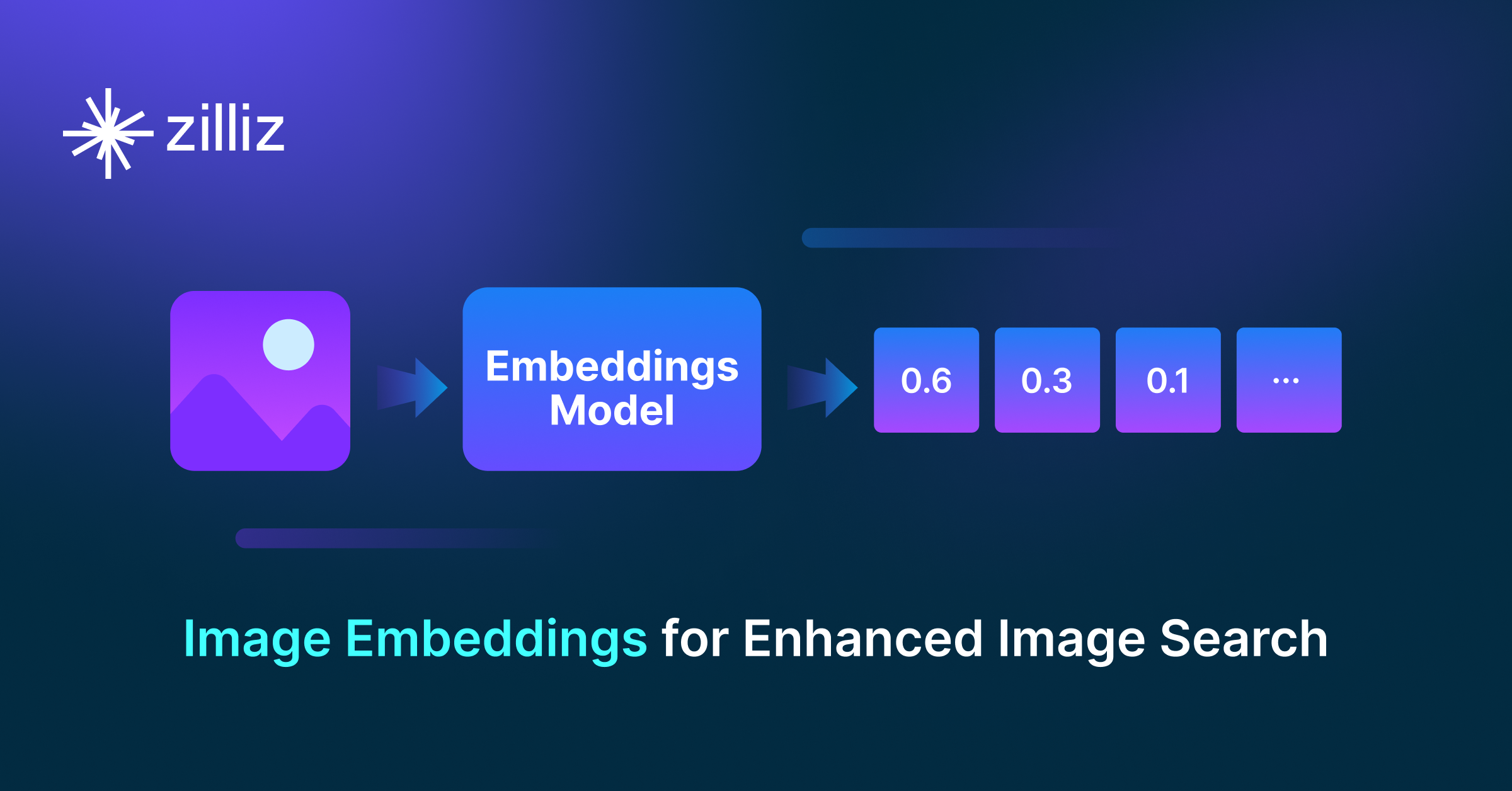

Introduction to nomic-embed-vision-v1.5

The nomic-embed-vision-v1.5 model is a fully replicable vision embedding model whose outputs share the latent space of nomic-embed-text-v1.5. As a result, existing nomic-embed-text-v1.5 text embeddings become multimodal: they can be compared directly with image embeddings. Its 92M-parameter vision encoder produces the image side of this shared space, which enables image embedding, unimodal semantic search, and multimodal retrieval across both images and text.
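Because both models map into one space and the listed similarity metric is cosine, cross-modal retrieval reduces to ranking image vectors by their cosine similarity to a text vector. The sketch below shows only that ranking step. It makes two assumptions: random 768-dimensional vectors stand in for real embeddings, and the "text" vector is deliberately placed near one image to mimic a matching caption. The helpers `cosine` and `rank_by_cosine` are hypothetical and are not part of any SDK.

```python
import math
import random

def cosine(a, b):
    """Cosine similarity of two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def rank_by_cosine(query, candidates, top_k=3):
    """Return (index, score) pairs for the top_k candidates most similar to query."""
    scored = [(i, cosine(query, c)) for i, c in enumerate(candidates)]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]

DIM = 768  # output dimension listed for nomic-embed-vision-v1.5
rng = random.Random(0)

# Hypothetical stand-ins: random vectors play the role of image embeddings.
image_vecs = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(5)]
# A "text" vector placed near image 2, mimicking a caption that matches that image.
text_vec = [x + 0.1 * rng.gauss(0, 1) for x in image_vecs[2]]

print(rank_by_cosine(text_vec, image_vecs))
```

With real data, `image_vecs` would come from nomic-embed-vision-v1.5 and `text_vec` from nomic-embed-text-v1.5. The ranking logic stays the same.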

How to create embeddings with nomic-embed-vision-v1.5

There are two primary ways to generate vector embeddings:

- PyMilvus: the Python SDK for Milvus, which integrates the nomic-embed-vision-v1.5 model.

- Nomic Python SDK: its embed module provides embedding functionality through the Nomic Embedding API.
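Whichever path produces the vectors, the sample later on this page reads them from the "embeddings" key of the dictionary returned by `embed.image`. A minimal defensive helper can catch a wrong model or a truncated batch before anything is written to the collection. This sketch assumes only that response shape. The name `extract_embeddings` is hypothetical and is not part of either SDK.

```python
import math

EXPECTED_DIM = 768  # nomic-embed-vision-v1.5 output dimension

def extract_embeddings(response, n_inputs, expected_dim=EXPECTED_DIM):
    """Pull the 'embeddings' list out of an embedding response dict and sanity-check it."""
    vectors = response["embeddings"]
    if len(vectors) != n_inputs:
        raise ValueError(f"expected {n_inputs} embeddings, got {len(vectors)}")
    for i, vec in enumerate(vectors):
        if len(vec) != expected_dim:
            raise ValueError(f"embedding {i} has dimension {len(vec)}, expected {expected_dim}")
        if not all(math.isfinite(x) for x in vec):
            raise ValueError(f"embedding {i} contains NaN or infinite values")
    return vectors

# Offline usage with a fake response shaped like {"embeddings": [...]}.
fake_response = {"embeddings": [[0.01] * 768, [0.02] * 768]}
vectors = extract_embeddings(fake_response, n_inputs=2)
print(len(vectors), len(vectors[0]))  # 2 768
```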

Once the vector embeddings are generated, they can be stored in Zilliz Cloud (a fully managed vector database service powered by Milvus) and used for semantic similarity search. Here are four key steps:

- Sign up for a Zilliz Cloud account for free.

- Set up a serverless cluster and obtain the Public Endpoint and API Key.

- Create a vector collection and insert your vector embeddings.

- Run a semantic search on the stored embeddings.
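The insert step works best when rows are sent in batches rather than one call per image. The sketch below pairs each image path with its vector and splits the rows into fixed-size batches. It assumes the field names `image_path` and `vector` used in the sample that follows. The batch size and the helper names `build_rows` and `chunked` are hypothetical choices for illustration.

```python
from itertools import islice

def build_rows(image_paths, embeddings):
    """Pair each image path with its vector as a row dict for insertion."""
    if len(image_paths) != len(embeddings):
        raise ValueError("image_paths and embeddings must have the same length")
    return [{"image_path": p, "vector": v} for p, v in zip(image_paths, embeddings)]

def chunked(rows, batch_size):
    """Yield successive lists of at most batch_size rows."""
    it = iter(rows)
    while batch := list(islice(it, batch_size)):
        yield batch

rows = build_rows([f"img_{i}.jpg" for i in range(5)], [[0.0] * 768 for _ in range(5)])
batches = list(chunked(rows, batch_size=2))
print([len(b) for b in batches])  # [2, 2, 1]
# With a live client, each batch would be passed to client.insert(collection_name=..., data=batch)
```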

Create embeddings with the Nomic SDK and insert them into Zilliz Cloud via PyMilvus for semantic search

```python
import os

from nomic import embed
from pymilvus import MilvusClient

# Zilliz Cloud credentials, read from environment variables (set these to your own values)
ZILLIZ_PUBLIC_ENDPOINT = os.environ["ZILLIZ_PUBLIC_ENDPOINT"]
ZILLIZ_API_KEY = os.environ["ZILLIZ_API_KEY"]

# Prepare images (can be local paths or URLs)
images = ["path/to/image1.jpg", "path/to/image2.jpg", "path/to/image3.jpg"]

# Generate embeddings for images using nomic-embed-vision-v1.5
image_embeddings = embed.image(images=images, model="nomic-embed-vision-v1.5")["embeddings"]

# Prepare query image
query_images = ["path/to/query_image.jpg"]

# Generate embeddings for the query image
query_embeddings = embed.image(images=query_images, model="nomic-embed-vision-v1.5")["embeddings"]

# Connect to Zilliz Cloud with Public Endpoint and API Key
client = MilvusClient(uri=ZILLIZ_PUBLIC_ENDPOINT, token=ZILLIZ_API_KEY)

COLLECTION = "nomic_vision_v1_5_images"

# Drop collection if it exists
if client.has_collection(collection_name=COLLECTION):
    client.drop_collection(collection_name=COLLECTION)

# Create collection with dimension 768 (nomic-embed-vision-v1.5 output dimension) and cosine similarity
client.create_collection(
    collection_name=COLLECTION,
    dimension=768,
    metric_type="COSINE",
    auto_id=True,
)

# Insert all images with their embeddings in a single batch;
# "image_path" is stored in the quick-setup collection's dynamic field
rows = [{"image_path": image, "vector": embedding} for image, embedding in zip(images, image_embeddings)]
client.insert(collection_name=COLLECTION, data=rows)

# Search for similar images
results = client.search(
    collection_name=COLLECTION,
    data=query_embeddings,
    # consistency_level="Strong",  # Strong consistency ensures accurate results but may increase latency
    output_fields=["image_path"],
    limit=3,
)

# Print search results; with COSINE, "distance" is a similarity score (higher means more similar)
print("Similar images found:")
for result in results[0]:
    print(f" - {result['entity']['image_path']} (cosine similarity: {result['distance']:.4f})")
```

For more information, refer to our PyMilvus Embedding Model documentation.
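When the collection uses the COSINE metric, the "distance" field in each search hit holds a similarity score, so larger values mean closer matches. A common follow-up is to discard weak matches below a threshold. The sketch below runs offline against mock results shaped like the nested list that `MilvusClient.search` returns, with one inner list per query vector. The helper `filter_hits` and the 0.4 threshold are hypothetical, and a useful threshold would need tuning on real data.

```python
def filter_hits(results, min_score):
    """Keep (image_path, score) for hits of the first query whose cosine score >= min_score.

    With metric_type="COSINE", Milvus reports similarity in the "distance" field,
    so larger values mean closer matches.
    """
    return [
        (hit["entity"]["image_path"], hit["distance"])
        for hit in results[0]
        if hit["distance"] >= min_score
    ]

# Mock results shaped like MilvusClient.search output (one inner list per query vector).
mock_results = [[
    {"id": 1, "distance": 0.91, "entity": {"image_path": "a.jpg"}},
    {"id": 2, "distance": 0.47, "entity": {"image_path": "b.jpg"}},
    {"id": 3, "distance": 0.12, "entity": {"image_path": "c.jpg"}},
]]
print(filter_hits(mock_results, min_score=0.4))  # [('a.jpg', 0.91), ('b.jpg', 0.47)]
```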
