Build RAG Chatbot with Haystack, Zilliz Cloud, OpenAI GPT-o1, and BAAI llm-embedder

Introduction to RAG

Retrieval-Augmented Generation (RAG) is a game-changer for GenAI applications, especially in conversational AI. It combines the power of pre-trained large language models (LLMs) like OpenAI’s GPT with external knowledge sources stored in vector databases such as Milvus and Zilliz Cloud, allowing for more accurate, contextually relevant, and up-to-date response generation. A RAG pipeline usually consists of four basic components: a vector database, an embedding model, an LLM, and a framework.
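
To make the retrieve-then-generate flow concrete, here is a toy, dependency-free sketch. It is not how Haystack or Zilliz Cloud work internally. `embed` is a hypothetical bag-of-words stand-in for a real embedding model, the "vector database" is a plain Python list, and the LLM call is left out. The sketch stops at the augmented prompt that would be sent to the model.

```python
import math
import re
from collections import Counter

def embed(text: str) -> Counter:
    """Hypothetical stand-in for an embedding model: a bag-of-words vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(question: str, corpus: list[str], top_k: int = 2) -> list[str]:
    """Rank every stored chunk by similarity to the question (exact search)."""
    q = embed(question)
    ranked = sorted(corpus, key=lambda doc: cosine(q, embed(doc)), reverse=True)
    return ranked[:top_k]

def build_prompt(question: str, docs: list[str]) -> str:
    """Augment the question with retrieved context before it goes to an LLM."""
    context = "\n".join(f"- {d}" for d in docs)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}\nAnswer:"

corpus = [
    "Milvus is an open-source vector database built for similarity search.",
    "Haystack is a Python framework for building LLM applications.",
    "Paris is the capital of France.",
]
question = "What is Milvus?"
prompt = build_prompt(question, retrieve(question, corpus, top_k=1))
print(prompt)
```

A real system replaces `embed` with a neural embedding model and the list scan with an approximate nearest neighbor index. The overall shape stays the same: embed, retrieve, augment, generate.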

Key Components We'll Use for This RAG Chatbot

This tutorial shows you how to build a simple RAG chatbot in Python using the following components:

- Haystack: An open-source Python framework designed for building production-ready NLP applications, particularly question answering and semantic search systems. Haystack excels at retrieving information from large document collections through its modular architecture that combines retrieval and reader components. Ideal for developers creating search applications, chatbots, and knowledge management systems that require efficient document processing and accurate information extraction from unstructured text.

- Zilliz Cloud: A fully managed vector database-as-a-service platform built on open-source Milvus, designed to handle high-performance vector data processing at scale. It enables organizations to efficiently store, search, and analyze large volumes of unstructured data, such as text, images, or audio, by leveraging advanced vector search technology. It offers a free tier supporting up to 1 million vectors.

- OpenAI GPT-o1: OpenAI's o1 reasoning model (API model name "o1"). It is trained to produce an internal chain of thought before it answers. Its strengths are multi-step reasoning, math, coding, and scientific questions, at the cost of higher latency and price than general-purpose chat models. It is a good fit for RAG answers that must combine or reason over several retrieved passages rather than perform a simple lookup.

- BAAI llm-embedder: A unified embedding model designed to enhance retrieval-augmented LLMs by generating high-quality text representations. It excels in cross-domain knowledge integration, robustness, and semantic accuracy, making it ideal for retrieval systems, question answering, and textual analysis tasks requiring precise contextual understanding and scalability.

By the end of this tutorial, you’ll have a functional chatbot capable of answering questions based on a custom knowledge base.

Note: This tutorial uses proprietary, API-based services, so make sure you have the required credentials beforehand: an OpenAI API key, plus the URI and token of a Zilliz Cloud cluster.

Step 1: Install and Set Up Haystack

pip install haystack-ai markdown-it-py mdit_plain

import os
import requests
from haystack import Pipeline
from haystack.components.converters import MarkdownToDocument
from haystack.components.preprocessors import DocumentSplitter
from haystack.components.writers import DocumentWriter

Step 2: Install and Set Up OpenAI GPT-o1

To use OpenAI models, you need to get an OpenAI API key. The Haystack integration with OpenAI models uses an OPENAI_API_KEY environment variable by default. Otherwise, you can pass an API key at initialization with api_key:

generator = OpenAIGenerator(api_key=Secret.from_token("<your-api-key>"), model="gpt-4o-mini")

The generator needs a prompt to operate. You can also pass any text generation parameters accepted by the openai.ChatCompletion.create method (the OpenAI Chat Completions API) directly to this component through the generation_kwargs parameter, both at initialization and when calling run(). For details on the supported parameters, refer to the OpenAI documentation.

Now let's set up the o1 model. The OpenAI integration is part of the core haystack-ai package, so there is nothing extra to install.

from haystack.components.generators import OpenAIGenerator
from haystack.utils import Secret

generator = OpenAIGenerator(model="o1", api_key=Secret.from_token("<your-api-key>"))
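
Because generation_kwargs can be set both at initialization and at run time, you can define defaults once and override them per call. The sketch below mimics that behavior in plain Python. It assumes that run-time values are merged over the initialization values key by key. This is our reading of Haystack's OpenAIGenerator, so check the source for your version. `merge_generation_kwargs` is a hypothetical helper, not a Haystack API. The keys shown, max_completion_tokens and reasoning_effort, are OpenAI parameters that o1 accepts.

```python
from typing import Any, Optional

def merge_generation_kwargs(init_kwargs: dict[str, Any],
                            run_kwargs: Optional[dict[str, Any]] = None) -> dict[str, Any]:
    """Hypothetical helper: per-call values override the defaults set at init."""
    return {**init_kwargs, **(run_kwargs or {})}

# Defaults you might pass as OpenAIGenerator(model="o1", generation_kwargs=...)
init_kwargs = {"max_completion_tokens": 2048, "reasoning_effort": "medium"}

# A per-call override you might pass as generator.run(prompt, generation_kwargs=...)
effective = merge_generation_kwargs(init_kwargs, {"reasoning_effort": "low"})
print(effective)  # {'max_completion_tokens': 2048, 'reasoning_effort': 'low'}
```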

Step 3: Install and Set Up BAAI llm-embedder

pip install sentence-transformers

from haystack import Document
from haystack.components.embedders import SentenceTransformersDocumentEmbedder
from haystack.components.embedders import SentenceTransformersTextEmbedder

# Embedder for the documents you index
doc_embedder = SentenceTransformersDocumentEmbedder(model="BAAI/llm-embedder")
doc_embedder.warm_up()  # downloads (if needed) and loads the model
# Embedder for the user's query
text_embedder = SentenceTransformersTextEmbedder(model="BAAI/llm-embedder")
text_embedder.warm_up()
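
Both embedders turn text into dense vectors, and retrieval compares those vectors. When vectors are L2-normalized, cosine similarity reduces to a plain dot product. This is why inner-product (IP) and cosine metrics rank normalized vectors identically. The Sentence Transformers embedders in Haystack expose a normalize_embeddings option for this. The pure-Python check below uses toy 3-dimensional vectors, not real llm-embedder output.

```python
import math

def l2_normalize(v: list[float]) -> list[float]:
    """Scale a vector to unit length (returns a copy of a zero vector unchanged)."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v] if norm else list(v)

def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))

def cosine(a: list[float], b: list[float]) -> float:
    return dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)))

query = [0.2, 0.9, 0.1]  # toy vectors, not real model output
doc = [0.4, 0.7, 0.3]

# After normalization, the dot product equals the cosine similarity.
print(round(cosine(query, doc), 6), round(dot(l2_normalize(query), l2_normalize(doc)), 6))
```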

Step 4: Install and Set Up Zilliz Cloud

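pip install --upgrade pymilvus milvus-haystack

from milvus_haystack import MilvusDocumentStore
from milvus_haystack.milvus_embedding_retriever import MilvusEmbeddingRetriever

# Public endpoint (URI) and API key (token) of your Zilliz Cloud cluster
ZILLIZ_CLOUD_URI = "<your-zilliz-cloud-uri>"
ZILLIZ_CLOUD_TOKEN = "<your-zilliz-cloud-token>"

document_store = MilvusDocumentStore(
    connection_args={"uri": ZILLIZ_CLOUD_URI, "token": ZILLIZ_CLOUD_TOKEN},
    drop_old=True,  # drop any existing collection with the same name
)
retriever = MilvusEmbeddingRetriever(document_store=document_store, top_k=3)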

Step 5: Build a RAG Chatbot

Now that you’ve set up all components, let’s build a simple chatbot. We’ll use the Milvus introduction doc as a private knowledge base. You can replace it with your own dataset to customize your RAG chatbot.

url = 'https://raw.githubusercontent.com/milvus-io/milvus-docs/refs/heads/v2.5.x/site/en/about/overview.md'
example_file = 'example_file.md'
response = requests.get(url)
response.raise_for_status()  # stop early if the download failed
with open(example_file, 'wb') as f:
    f.write(response.content)

file_paths = [example_file]  # You can replace it with your own file paths.
indexing_pipeline = Pipeline()
indexing_pipeline.add_component("converter", MarkdownToDocument())
indexing_pipeline.add_component("splitter", DocumentSplitter(split_by="sentence", split_length=2))
indexing_pipeline.add_component("embedder", doc_embedder)  # the document embedder from Step 3
indexing_pipeline.add_component("writer", DocumentWriter(document_store))
indexing_pipeline.connect("converter", "splitter")
indexing_pipeline.connect("splitter", "embedder")
indexing_pipeline.connect("embedder", "writer")
indexing_pipeline.run({"converter": {"sources": file_paths}})

# print("Number of documents:", document_store.count_documents())
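
In the indexing pipeline, DocumentSplitter(split_by="sentence", split_length=2) cuts each document into chunks of two sentences, and each chunk becomes one vector in Zilliz Cloud. The sketch below approximates that grouping with a naive regex sentence splitter. Haystack's own sentence detection is more careful and varies by version, so treat the hypothetical `split_into_chunks` as an illustration of the idea, not a reimplementation. The optional overlap mirrors the idea behind DocumentSplitter's split_overlap parameter.

```python
import re

def split_into_chunks(text: str, split_length: int = 2, split_overlap: int = 0) -> list[str]:
    """Group sentences into chunks of `split_length`, sharing `split_overlap` sentences."""
    if not 0 <= split_overlap < split_length:
        raise ValueError("split_overlap must be >= 0 and smaller than split_length")
    # Naive sentence boundary: terminal punctuation followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    step = split_length - split_overlap
    chunks = []
    for start in range(0, len(sentences), step):
        chunks.append(" ".join(sentences[start:start + split_length]))
        if start + split_length >= len(sentences):
            break  # the last chunk already reaches the final sentence
    return chunks

text = "Milvus is a vector database. It stores embeddings. It supports ANN search. Zilliz Cloud hosts it."
for chunk in split_into_chunks(text, split_length=2):
    print(chunk)
```

Smaller chunks give more precise matches but less context per hit. Overlap trades extra vectors for fewer answers cut in half at a chunk boundary.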

question = "What is Milvus?"  # You can replace it with your own question.
retrieval_pipeline = Pipeline()
retrieval_pipeline.add_component("embedder", text_embedder)
retrieval_pipeline.add_component("retriever", retriever)
retrieval_pipeline.connect("embedder", "retriever")
retrieval_results = retrieval_pipeline.run({"embedder": {"text": question}})
# for doc in retrieval_results["retriever"]["documents"]:
#     print(doc.content)
#     print("-" * 10)

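from haystack.utils import Secret
from haystack.components.builders import PromptBuilder

# A component instance can belong to only one pipeline, so create fresh
# retriever and text embedder instances for the RAG pipeline.
retriever = MilvusEmbeddingRetriever(document_store=document_store, top_k=3)
text_embedder = SentenceTransformersTextEmbedder(model="BAAI/llm-embedder")
text_embedder.warm_up()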

prompt_template = """Answer the following query based on the provided context. If the context does
not include an answer, reply with 'I don't know'.\n
Query: {{query}}
Documents:
{% for doc in documents %}
{{ doc.content }}
{% endfor %}
Answer:
"""

rag_pipeline = Pipeline()
rag_pipeline.add_component("text_embedder", text_embedder)
rag_pipeline.add_component("retriever", retriever)
rag_pipeline.add_component("prompt_builder", PromptBuilder(template=prompt_template))
rag_pipeline.add_component("generator", generator)
rag_pipeline.connect("text_embedder.embedding", "retriever.query_embedding")
rag_pipeline.connect("retriever.documents", "prompt_builder.documents")
rag_pipeline.connect("prompt_builder", "generator")
results = rag_pipeline.run({"text_embedder": {"text": question}, "prompt_builder": {"query": question}})
print('RAG answer:\n', results["generator"]["replies"][0])
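
The pipeline above answers a single question. To turn it into a chatbot, you can wrap the run call in a loop. The sketch below keeps the loop independent of Haystack so it can be tested on its own. `chat` and `answer_fn` are hypothetical names. `answer_fn` is any callable that maps a question to a reply, for example one that calls rag_pipeline.run as above and returns results["generator"]["replies"][0].

```python
from typing import Callable, Iterable

def chat(answer_fn: Callable[[str], str], questions: Iterable[str]) -> list[str]:
    """Answer each question in turn until the user types 'exit' or 'quit'."""
    replies = []
    for question in questions:
        question = question.strip()
        if question.lower() in {"exit", "quit"}:
            break
        if not question:
            continue  # ignore empty input
        replies.append(answer_fn(question))
    return replies

# With the real pipeline, answer_fn could be:
# def answer_fn(q):
#     out = rag_pipeline.run({"text_embedder": {"text": q}, "prompt_builder": {"query": q}})
#     return out["generator"]["replies"][0]

def echo(q: str) -> str:
    """Stand-in for the RAG pipeline so the loop can run without API calls."""
    return f"(answer to: {q})"

print(chat(echo, ["What is Milvus?", "", "exit", "ignored"]))  # ['(answer to: What is Milvus?)']
```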

Optimization Tips

As you build your RAG system, optimization is key to ensuring peak performance and efficiency. While setting up the components is an essential first step, fine-tuning each one will help you create a solution that works even better and scales seamlessly. In this section, we’ll share some practical tips for optimizing all these components, giving you the edge to build smarter, faster, and more responsive RAG applications.

Haystack optimization tips

To optimize Haystack in a RAG setup, back your retriever with an efficient, scalable vector store such as Milvus or FAISS for fast similarity search. Tune your document store settings, such as indexing strategies and storage backends, to balance speed and accuracy. Batch embedding generation (the Sentence Transformers embedders expose a batch_size parameter) to reduce latency and make better use of your hardware or API quota. Cache results for frequently repeated queries to avoid redundant embedding, retrieval, and generation work. If you add an extractive reader, for example for extractive question answering, a lightweight transformer model such as DistilBERT can speed up response times. Implement query rewriting or metadata filtering to improve retrieval quality, so that the most relevant documents reach the generator. Finally, measure your pipeline with Haystack's evaluator components (for example, for document recall or answer faithfulness) and refine the setup based on real-world query performance.
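
One way to apply the caching tip is to memoize answers for repeated questions with Python's functools.lru_cache. This is application-level caching around whatever function runs your pipeline, not a Haystack feature. `make_cached_answerer` is a hypothetical helper. Normalizing case and whitespace before the lookup is an assumption that suits FAQ-style traffic.

```python
from functools import lru_cache
from typing import Callable

def make_cached_answerer(answer_fn: Callable[[str], str], maxsize: int = 1024) -> Callable[[str], str]:
    """Wrap answer_fn so identical (normalized) questions skip the pipeline."""
    @lru_cache(maxsize=maxsize)
    def cached(normalized_question: str) -> str:
        # Note: the wrapped pipeline receives the normalized question.
        return answer_fn(normalized_question)

    def answer(question: str) -> str:
        # Collapse whitespace and case so trivial variants share a cache entry.
        return cached(" ".join(question.lower().split()))

    answer.cache_info = cached.cache_info  # expose hit/miss statistics
    return answer

calls = []

def slow_answer(q: str) -> str:
    calls.append(q)  # stands in for an expensive rag_pipeline.run call
    return f"answer for {q!r}"

ask = make_cached_answerer(slow_answer)
ask("What is Milvus?")
ask("  what is   MILVUS? ")
print(len(calls), ask.cache_info())
```

Cached answers go stale when the knowledge base changes, so clear or rebuild the cache after re-indexing.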

Zilliz Cloud optimization tips

Optimizing Zilliz Cloud for a RAG system involves efficient index selection, query tuning, and resource management. Use Hierarchical Navigable Small World (HNSW) indexing for fast approximate nearest neighbor search that balances recall and efficiency. Tune the HNSW build parameters M and efConstruction, and the search parameter ef, to your dataset size and query workload. Larger values generally raise recall at the cost of memory, build time, or query latency. Enable dynamic scaling to handle fluctuating workloads, ensuring smooth performance under varying query loads. Use data partitioning to group related data so searches can skip irrelevant partitions, reducing unnecessary comparisons. Re-embed and re-index your data when your documents or embedding model change, so results stay relevant as your dataset evolves. Use hybrid search techniques, such as combining vector and keyword search, to improve response quality. Monitor system metrics in Zilliz Cloud’s dashboard and adjust configurations accordingly to maintain low-latency, high-throughput performance.
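
Hybrid search returns two ranked lists, vector hits and keyword hits, which must be merged into one ranking. A common choice is Reciprocal Rank Fusion (RRF), which Milvus offers as RRFRanker for hybrid search. The pure-Python sketch below shows only the scoring rule: each list contributes 1 / (k + rank) per document, using the commonly used k = 60. The document IDs are made up.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[tuple[str, float]]:
    """Fuse several ranked ID lists; items ranked high in many lists win."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)

vector_hits = ["doc3", "doc1", "doc7"]   # from dense (embedding) search
keyword_hits = ["doc1", "doc9", "doc3"]  # from sparse / full-text search
fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
for doc_id, score in fused:
    print(doc_id, round(score, 5))
```

RRF uses only ranks, not raw scores, so it needs no calibration between the very different score scales of dense and keyword search.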

OpenAI GPT-o1 optimization tips

To optimize OpenAI GPT-o1 in a RAG setup, write prompts with explicit instructions and structured context (e.g., “Answer using: [retrieved text]”). Limit response length with max_completion_tokens, since o1 rejects the older max_tokens parameter. This limit also covers o1's hidden reasoning tokens, so a value that is too low can leave no room for the visible answer. o1 does not let you lower the temperature, so rely on grounded prompts for factual accuracy. Use the reasoning_effort parameter (low, medium, or high) to trade answer depth for latency and cost. Preprocess retrieved documents to remove irrelevant content, ensuring inputs fit token limits. Cache frequent queries to minimize API calls. Experiment with chunking strategies for context injection and prioritize critical information at the prompt’s start or end. Monitor latency and adjust batch sizes for throughput efficiency.
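
The advice to trim retrieved text to fit the context and to put critical material at the start or end of the prompt can be sketched as below. Documents are assumed to arrive sorted by relevance, as the retriever returns them. The character budget is a rough stand-in for a real token count. The ordering step follows the same idea as Haystack's LostInTheMiddleRanker without claiming to match its implementation. `pack_context` is a hypothetical helper.

```python
def pack_context(docs_by_relevance: list[str], max_chars: int) -> list[str]:
    """Keep the most relevant docs that fit the budget, then place the strongest at the edges."""
    kept, used = [], 0
    for doc in docs_by_relevance:
        if used + len(doc) > max_chars:
            continue  # skip docs that would overflow; a shorter one may still fit
        kept.append(doc)
        used += len(doc)
    # Alternate between front and back: rank 1 opens the context, rank 2 closes it,
    # and the weakest surviving docs end up in the middle.
    front, back = kept[0::2], kept[1::2]
    return front + back[::-1]

docs = ["A" * 40, "B" * 30, "C" * 50, "D" * 20, "E" * 10]  # sorted best to worst
print([d[0] for d in pack_context(docs, max_chars=100)])
```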

BAAI llm-embedder optimization tips

Optimize BAAI llm-embedder in RAG by normalizing input text (lowercasing, removing special characters) to reduce noise, batching inference for efficiency, and fine-tuning on domain-specific data if labeled examples are available. Use dynamic truncation or padding to handle variable-length inputs, and cache frequent queries to minimize recomputation. Experiment with pooling strategies (e.g., CLS token vs. mean-pooling) for optimal semantic capture. Regularly evaluate retrieval accuracy via recall@k metrics and consider hybrid retrieval (dense + sparse) to balance precision and coverage. Monitor latency and memory usage to scale effectively.
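
Evaluating recall@k requires a small labeled set: questions paired with the IDs of chunks known to contain the answer. The sketch below computes recall@k from ranked ID lists. The data are hypothetical. In practice, each ranked list would be the ids of retrieval_results["retriever"]["documents"] from Step 5. Haystack also ships evaluator components, such as DocumentRecallEvaluator, for the same purpose.

```python
def recall_at_k(retrieved: list[list[str]], relevant: list[set[str]], k: int) -> float:
    """Average fraction of each query's relevant IDs found in its top-k results."""
    if len(retrieved) != len(relevant):
        raise ValueError("need one relevant set per query")
    per_query = []
    for hits, gold in zip(retrieved, relevant):
        if not gold:
            continue  # queries without labels don't count
        per_query.append(len(set(hits[:k]) & gold) / len(gold))
    return sum(per_query) / len(per_query) if per_query else 0.0

retrieved = [["c1", "c4", "c9"], ["c2", "c7", "c3"]]  # ranked chunk IDs per query
relevant = [{"c4"}, {"c3", "c8"}]                      # hypothetical labels
print(recall_at_k(retrieved, relevant, k=1), recall_at_k(retrieved, relevant, k=3))
```

Tracking this number before and after a change (new chunk size, fine-tuned embedder, hybrid retrieval) shows whether the change actually helps retrieval.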

By implementing these tips across your components, you'll be able to enhance the performance and functionality of your RAG system, ensuring it’s optimized for both speed and accuracy. Keep testing, iterating, and refining your setup to stay ahead in the ever-evolving world of AI development.

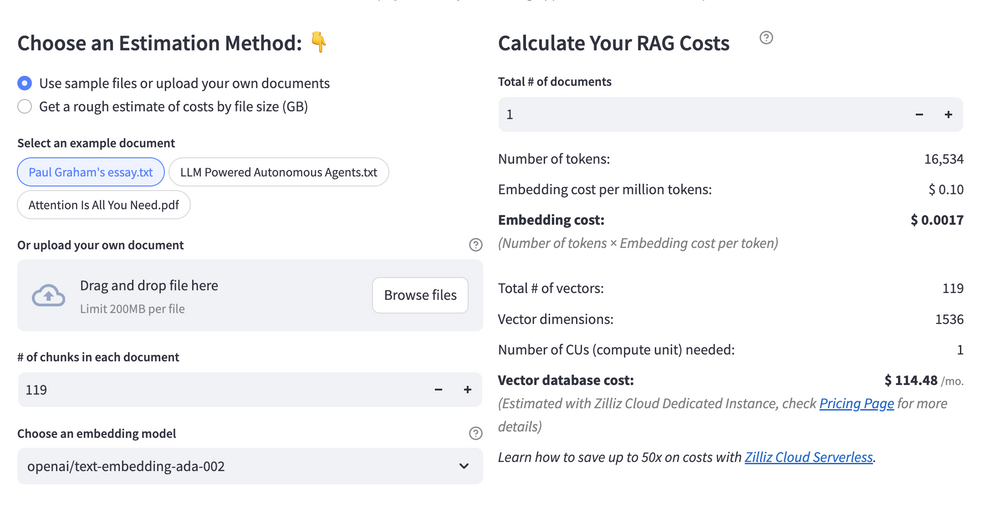

RAG Cost Calculator: A Free Tool to Calculate Your Cost in Seconds

Estimating the cost of a Retrieval-Augmented Generation (RAG) pipeline involves analyzing expenses across vector storage, compute resources, and API usage. Key cost drivers include vector database queries, embedding generation, and LLM inference.

RAG Cost Calculator is a free tool that quickly estimates the cost of building a RAG pipeline, including chunking, embedding, vector storage/search, and LLM generation. It also helps you identify cost-saving opportunities and achieve up to 10x cost reduction on vector databases with the serverless option.
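
The cost drivers above combine into a simple monthly estimate. The sketch below does only the arithmetic. Every price is a parameter you fill in from current OpenAI and Zilliz Cloud pricing, and none are quoted here. The token counts are your own measurements. `estimate_monthly_cost` is a hypothetical helper, not the RAG Cost Calculator's formula.

```python
from dataclasses import dataclass

@dataclass
class Prices:
    """Unit prices in USD; fill these in from the providers' current price lists."""
    llm_input_per_1m_tokens: float
    llm_output_per_1m_tokens: float  # for o1, hidden reasoning tokens are billed as output
    vector_db_per_month: float       # flat cluster or plan cost, if any

def estimate_monthly_cost(queries_per_month: int,
                          input_tokens_per_query: int,
                          output_tokens_per_query: int,
                          prices: Prices) -> float:
    """LLM generation cost plus a flat vector-database cost.

    Query embedding is not priced because BAAI/llm-embedder runs locally in this
    tutorial; add a term for it if you switch to a hosted embedding API.
    """
    llm_input = queries_per_month * input_tokens_per_query / 1_000_000 * prices.llm_input_per_1m_tokens
    llm_output = queries_per_month * output_tokens_per_query / 1_000_000 * prices.llm_output_per_1m_tokens
    return llm_input + llm_output + prices.vector_db_per_month

# Placeholder prices for illustration only, not real pricing.
placeholder = Prices(llm_input_per_1m_tokens=1.0, llm_output_per_1m_tokens=4.0, vector_db_per_month=0.0)
print(estimate_monthly_cost(10_000, 1_500, 500, placeholder))  # 35.0
```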

Calculate your RAG cost


What Have You Learned?

By diving into this tutorial, you’ve unlocked the power of building a modern RAG system from the ground up. You combined the flexibility of Haystack as your orchestration framework, the speed and scalability of Zilliz Cloud as your vector database, the reasoning ability of OpenAI GPT-o1 as your LLM, and the precision of BAAI llm-embedder as your embedding model. You’ve seen firsthand how these components work in harmony: Haystack streamlines the pipeline setup, Zilliz Cloud stores and retrieves vectors at lightning speed, BAAI’s embeddings turn raw data into meaningful numerical representations, and GPT-o1 generates human-like responses that feel natural and context-aware. Together, they form a seamless workflow where data flows from ingestion to insight, empowering you to build applications that don’t just answer questions but understand them deeply. Plus, you’ve picked up pro tips for optimizing performance, like tweaking chunk sizes for embeddings or balancing latency with accuracy, small adjustments that make a big difference. And don’t forget that free RAG cost calculator you explored! It’s your secret weapon for budgeting smarter and scaling confidently.

Now that you’ve seen how these pieces fit together, the real magic begins—your magic. You’ve got the tools to create RAG systems that revolutionize how people interact with information, whether you’re building chatbots, research assistants, or custom enterprise solutions. Experiment with different datasets, fine-tune your models, and let Zilliz Cloud handle the heavy lifting so you can focus on innovation. Remember, every line of code you write brings you closer to solving real-world problems in ways that were once sci-fi. So fire up your IDE, tweak those parameters, and start building something awesome. The future of intelligent applications isn’t just coming—it’s already here, and you’re the one holding the keys. Let’s go make it happen! 🚀

Further Resources

🌟 In addition to this RAG tutorial, unleash your full potential with these incredible resources to level up your RAG skills.

- How to Build a Multimodal RAG | Documentation

- How to Enhance the Performance of Your RAG Pipeline

- Graph RAG with Milvus | Documentation

- How to Evaluate RAG Applications - Zilliz Learn

- Generative AI Resource Hub | Zilliz

We'd Love to Hear What You Think!

We’d love to hear your thoughts! 🌟 Leave your questions or comments below or join our vibrant Milvus Discord community to share your experiences, ask questions, or connect with thousands of AI enthusiasts. Your journey matters to us!

If you like this tutorial, show your support by giving our Milvus GitHub repo a star ⭐—it means the world to us and inspires us to keep creating! 💖

