Notion's Vector Search Is Excellent. Their Next Problem Is Harder.

Reading "Two Years of Vector Search at Notion" — and what it predicts about what comes next

Notion published an engineering post covering two years of vector search infrastructure. I read it with genuine admiration and a strong sense of déjà vu. Almost every issue they describe, migration they executed, and workaround they built has a close parallel in big data history, and big data took roughly fifteen years to converge on its long-term architecture.

What struck me most was not just the quality of execution. It was how much effort went into platform-level concerns that, in a more complete architecture, should live in infrastructure rather than in product engineering.

If I had to guess the title of their next engineering post, it might be "How we spent six months on an embedding model upgrade." Or "How we migrated a hundred million workspaces to a new vector space without downtime." Or "How we built offline context engineering to give Notion AI durable memory."

Everything solved so far is Chapter 1. Chapter 2 is harder.

What Notion Built

The story starts in November 2023. Notion launched AI Q&A and almost immediately accumulated a waitlist of millions of workspaces. Their vector database ran on pod clusters — storage and compute bundled together, sharded by workspace ID. Within a month, they were approaching capacity limits.

Rather than resharding under live traffic, they took the pragmatic path: spin up new index clusters tagged with a generation ID, route new workspaces to fresh generations, and leave existing workspaces in place. Combined with Spark and Airflow tuning, they cleared the waitlist in four months. Daily onboarding capacity went up 600x.
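To make the generation idea concrete, here is a minimal sketch of what such routing logic could look like. It is not Notion's implementation: the class names, the per-generation capacity limit, and the hash-based shard choice are all assumptions made for illustration.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class Generation:
    gen_id: int
    num_shards: int
    capacity: int  # hypothetical cap on workspaces per generation
    workspaces: set = field(default_factory=set)


class GenerationRouter:
    """Pins each workspace to the generation it was onboarded into."""

    def __init__(self, num_shards: int, capacity: int):
        self.num_shards = num_shards
        self.capacity = capacity
        self.generations = [Generation(0, num_shards, capacity)]
        self.assignment = {}  # workspace_id -> gen_id

    def onboard(self, workspace_id: str) -> int:
        # Existing workspaces stay where they are; nothing is resharded.
        if workspace_id in self.assignment:
            return self.assignment[workspace_id]
        current = self.generations[-1]
        if len(current.workspaces) >= current.capacity:
            # The newest generation is full: open a fresh one for new arrivals.
            current = Generation(len(self.generations), self.num_shards, self.capacity)
            self.generations.append(current)
        current.workspaces.add(workspace_id)
        self.assignment[workspace_id] = current.gen_id
        return current.gen_id

    def route(self, workspace_id: str) -> tuple:
        # Every read and write must first resolve the generation, then the shard.
        gen_id = self.assignment[workspace_id]
        digest = hashlib.sha256(workspace_id.encode("utf-8")).digest()
        shard = int.from_bytes(digest[:8], "big") % self.generations[gen_id].num_shards
        return gen_id, shard
```

The extra lookup in `route` is the part that lingers: every code path that touches the index has to know which generation a workspace lives in.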

Clean engineering under pressure. The cost: multi-generation routing logic that would follow them for two years.

Then two major migrations:

May 2024 — pod architecture to serverless. Storage and compute are decoupled. Costs dropped 50% immediately, and the generation routing complexity dissolved with it — because capacity was no longer a finite resource that had to be planned in advance.

Late 2024 through early 2025 — from their serverless provider to turbopuffer. Their data was stored in a vendor's proprietary storage system, so switching required a full re-index. They used the opportunity to upgrade their embedding model as well.

Mid-2025: they shipped Page State. Every text span is hashed with xxHash (64-bit) and stored in DynamoDB. When a page updates, the system compares hashes before deciding whether to re-embed. If only metadata changed, it patches the vector database directly without recomputing the vector.
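The decision logic is simple enough to sketch. The version below is an illustration under stated assumptions, not Notion's code: a plain dict stands in for the DynamoDB row, `plan_page_update` is a hypothetical name, and `hashlib.blake2b` truncated to 8 bytes stands in for 64-bit xxHash so the example runs on the standard library alone.

```python
import hashlib


def span_hash(text: str) -> str:
    # Stand-in for the 64-bit xxHash mentioned above: blake2b truncated to
    # 8 bytes, chosen only so the sketch needs no third-party package.
    return hashlib.blake2b(text.encode("utf-8"), digest_size=8).hexdigest()


def plan_page_update(stored: dict, spans: list, metadata: dict) -> dict:
    """Decide what work a page update needs.

    `stored` is the previous page state, for example a row read from a
    key-value store: {"hashes": [...], "metadata": {...}}.
    """
    new_hashes = [span_hash(s) for s in spans]
    old_hashes = set(stored.get("hashes", []))
    return {
        # Only spans whose hash was not seen before need a new embedding.
        "embed": [s for s, h in zip(spans, new_hashes) if h not in old_hashes],
        # Hashes that disappeared correspond to vectors that can be deleted.
        "delete": sorted(old_hashes - set(new_hashes)),
        # A metadata-only change becomes a patch, with no recomputation.
        "patch_metadata": metadata != stored.get("metadata", {}),
        "next_state": {"hashes": new_hashes, "metadata": metadata},
    }
```

Renaming a page whose text is unchanged yields an empty `embed` list and `patch_metadata` set to true, which is the cheap path described above.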

Shortly after, embedding generation moved from Spark + external API to self-hosted models on Ray via Anyscale. CPU preprocessing and GPU inference now run in a single pipeline, eliminating the S3 handoff between systems.

Two years. Five major bets. Costs down 90% from peak. It's a genuinely impressive run.

This Is a Story Big Data Already Told

At each step, I kept wanting to annotate with a timestamp: the big data ecosystem solved this in [year]. It is not a coincidence. The same constraints produce the same architectural moves.

1. Storage-Compute Separation and Serverless: Paying for Work, Not for Existence

Notion's two cost migrations — from pod clusters to serverless, then to an object-storage-backed serverless setup — can be read as one architectural move: stop paying for infrastructure existence and start paying for actual work. Whether a workspace was actively queried or idle for weeks, the old model kept clusters running and billing.

Seen this way, the key shift is decoupling storage from compute: indexes live in object storage, cold collections hold almost no compute, hot collections load on demand, and spend tracks real usage much more closely. In big data, this was not just an HDFS-to-S3 swap; it was a broader move from storage-compute integration to storage-compute separation.

The sequence mattered: first, independent scaling solved the pain of elasticity and capacity planning; then, ephemeral compute plus persistent object storage reduced usage costs. Hadoop's default deployment co-located storage and compute for data locality, which made independent scaling hard. Once data moved to S3, Spark clusters could spin up per job and shut down afterward while storage remained persistent.

The trade-off is also structural. Object storage access sets a floor on cold-start latency: multiple GET requests, deserialization, and index reconstruction. This is not a parameter-tuning issue so much as a storage-physics issue. For Notion's current workload, where most workspaces are queried infrequently, this is a good trade-off. But as AI usage deepens, two limits become harder to ignore: large tenants with sustained high query volume, and users returning to cold workspaces where tail latency becomes user-visible. At that point, single-tier S3-native caching is often insufficient; keeping warm data on local SSDs becomes materially more important.

2. Lambda Architecture: Real-Time Is the Need, Fragmentation Is the Cost

Notion's indexing system runs two paths: an offline Spark pipeline for large-scale backfill, and Kafka consumers for real-time updates. This is a classic Lambda setup.

If you care about data freshness, this design is a natural starting point. The problem is what happens later: the same logic starts to spread across multiple systems, and teams spend more time keeping paths consistent.

In Notion's case, that split now spans Spark, Kafka consumers, DynamoDB for Page State, Ray for embedding, and a separate serving layer.

Each component is doing the right job. The hidden tax is the integration surface:

- more glue code

- more state handoff

- more chances for drift between offline and online behavior

A lot of this is amplified by closed storage for serving. Provider migration means full re-indexing. Partial update support means external tracking tables. Offline improvements do not help online retrieval until another synchronization step completes.

Kappa's core is simple: one processing model. The stronger form is One Engine: batch and streaming as two modes of one system, not two systems glued together.
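A toy sketch of the "one processing model" idea: the indexing logic lives in a single function, the streaming consumer calls it per message, and a backfill is just a replay of the same log through the same function. The event shape and the names `apply_event`, `backfill`, and `StreamConsumer` are hypothetical, and the string normalization stands in for real chunking and embedding.

```python
def apply_event(index: dict, event: dict) -> None:
    """The only place indexing logic lives, shared by both modes."""
    if event["op"] == "delete":
        index.pop(event["page_id"], None)
    else:
        # Placeholder for chunking and embedding the page text.
        index[event["page_id"]] = event["text"].strip().lower()


def backfill(log) -> dict:
    """Batch mode: replay the whole log through the same handler."""
    index = {}
    for event in log:
        apply_event(index, event)
    return index


class StreamConsumer:
    """Streaming mode: apply events one at a time as they arrive."""

    def __init__(self):
        self.index = {}

    def on_message(self, event: dict) -> None:
        apply_event(self.index, event)
```

Because both modes share `apply_event`, replaying a log offline and consuming it online produce the same index by construction. In a Lambda setup, that equivalence has to be maintained by hand across two codebases.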

That is exactly where Lakebase fits. It brings this One Engine idea to a lake-native foundation, bridging the OLTP/OLAP gap so that real-time updates, online serving, and offline processing run on a single table.

3. The Missing Layer: A Declarative Interface for Vector Operations

The move from Spark + an external embedding API to a unified Ray pipeline is the right call — collapsing two systems that communicated via S3 into a single compute graph is a real simplification, and the projected 90%+ reduction in embedding costs reflects a genuine architectural improvement. But it's a simplification at the infrastructure wiring level, and what's still missing is one at the semantic level.

In the Hadoop era, getting anything done with data meant writing MapReduce jobs: you had to understand the Mapper and Reducer lifecycle, how the shuffle worked, how to handle failures, and how to chain stages. Hive added a declarative layer — you express what result you want in SQL, and the system figures out how to turn that into MapReduce execution. That shift didn't just make things faster; it changed who could work with data at all, and it made the engineering cost of a new data operation much closer to the cost of writing a query than to the cost of writing a distributed system.

However, vector and unstructured data don't have this layer yet.

- If you want to deduplicate a billion-vector corpus before a model training run, you write Spark jobs, choose the right distance computation operators, manage output formats, and figure out how to feed results back to wherever your serving layer lives.

- If you want to upgrade from one embedding model to a newer one across hundreds of millions of documents, you build a backfill pipeline, manage old and new embeddings coexisting during the cutover, and clean up afterward — and this process is a multi-month engineering project rather than a routine operation.

- If you want to maintain compressed user memory across sessions, you design the compression logic, manage versioned writes, and wire the output into the retrieval path by hand.

Every one of these is a systems engineering project rather than a data operation.

The declarative equivalent would be to express intent — "deduplicate this collection with cosine tolerance 0.05 and write results back," "backfill embeddings using this new model," "compress interaction history older than 90 days into dense memory representations" — and have the system handle execution planning, resource management, and consistency. That's the layer that makes Context Engineering tractable at scale rather than a custom engineering project each time. And it is still largely missing from current vector infrastructure stacks, regardless of serving backend.
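As a rough illustration of the split between intent and execution, the sketch below expresses the deduplication example as a small declarative spec and pairs it with a deliberately naive executor. Everything here is hypothetical: `Deduplicate` and `execute` are not an existing API, the in-memory dict stands in for a collection, and a real system would plan an approximate, distributed job rather than an O(n²) loop. Vectors are assumed to be non-zero.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Deduplicate:
    """Declarative intent: what to do, not how to do it."""
    collection: str
    cosine_tolerance: float


def cosine_distance(a, b) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def execute(op: Deduplicate, collections: dict) -> list:
    """Naive executor: keep the first vector of each near-duplicate group."""
    kept = []
    for doc_id, vec in collections[op.collection].items():
        if all(cosine_distance(vec, kept_vec) > op.cosine_tolerance
               for _, kept_vec in kept):
            kept.append((doc_id, vec))
    # "Write results back": the collection now holds only the survivors.
    collections[op.collection] = dict(kept)
    return [doc_id for doc_id, _ in kept]
```

The caller only writes `execute(Deduplicate("docs", 0.05), collections)`. Swapping the naive loop for a distributed plan would not change that line, which is the property Hive gave MapReduce users.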

| Notion's decision | Architectural pattern |
|---|---|
| Pod clusters → serverless → object-storage-backed serverless | Storage-compute separation: lifecycle decoupling applied to vector indexes |
| Dual-path indexing + Page State + generation routing | Real-time-first Lambda design: fast freshness, but rising sync cost across online/offline paths |
| Spark + S3 handoff → Ray | Collapsing inter-system I/O, but the declarative interface layer above it is still missing |

Big data took 15 years to converge on an architecture in which storage, compute, and processing semantics were cleanly separated and composable. Notion has moved through most of that arc in two years, which is genuinely impressive. The piece that hasn't landed yet is the one that would make the next two years substantially cheaper to build.

But This Is Only Chapter One

Everything Notion has solved is the infrastructure for one AI feature.

All of this engineering (generation routing, provider migrations, Page State, Ray compute unification) exists to make AI Q&A work well, cheaply, and reliably. They've done that. The infrastructure for this one feature is now mature. But Notion is not going to ship only one AI feature.

Three Problems I Expect in Their Next Engineering Post

Problem 1: The ceiling of serverless + cold/hot separation at scale

Notion's current choice is a good trade-off for its current workload: millions of workspaces, most of which are queried infrequently, where S3-native economics are strong. The limit is that performance ceilings here are set more by object-storage behavior than by tunable software knobs.

S3 first-byte latency is often in the 10–100 ms range. A cold index may require multiple GET requests and deserialization before it is queryable. In production, cold-query p99 can reach multi-second ranges. That's usually not a model problem; it's index warmup.
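A back-of-the-envelope model shows how the pieces add up. Only the first-byte range comes from the figures above; the function name, the number of sequential GETs, the index size, the throughput, and the deserialization time are hypothetical inputs chosen for illustration.

```python
def cold_query_floor_ms(sequential_gets: int, first_byte_ms: float,
                        index_bytes: int, throughput_mb_s: float,
                        deserialize_ms: float) -> float:
    """Lower bound on cold-query latency before any search work happens."""
    transfer_ms = index_bytes / (throughput_mb_s * 1_000_000) * 1000
    return sequential_gets * first_byte_ms + transfer_ms + deserialize_ms


# Hypothetical cold index: 5 dependent GETs at 100 ms first-byte latency,
# 200 MB fetched at 100 MB/s, and 300 ms to rebuild the in-memory index.
floor = cold_query_floor_ms(5, 100, 200_000_000, 100, 300)
```

With these inputs the floor is 2,800 ms, and none of its terms can be reduced by tuning the query engine itself.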

The main pain points are predictable.

- First, large tenants with high, sustained query volume start to feel the limits of single-tier caching.

- Second, users returning to cold workspaces can see noticeable latency spikes. Multi-tier caching (memory + local SSD + object storage) changes this tail shape in ways single-tier designs usually cannot.
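The tiering idea can be sketched as a small lookup chain. This is a toy model under stated assumptions, not any vendor's cache: `TieredIndexCache` is a hypothetical name, dicts stand in for memory, local SSD, and object storage, and eviction is plain LRU counted in slots rather than bytes.

```python
from collections import OrderedDict


class TieredIndexCache:
    """Memory -> local SSD -> object storage, with LRU demotion."""

    def __init__(self, object_store: dict, memory_slots: int, ssd_slots: int):
        self.object_store = object_store  # stand-in for S3
        self.memory = OrderedDict()
        self.ssd = OrderedDict()
        self.memory_slots = memory_slots
        self.ssd_slots = ssd_slots

    def get(self, namespace: str):
        """Return (index, tier_that_served_it)."""
        if namespace in self.memory:
            self.memory.move_to_end(namespace)
            return self.memory[namespace], "memory"
        if namespace in self.ssd:
            index, tier = self.ssd.pop(namespace), "ssd"
        else:
            index, tier = self.object_store[namespace], "object_storage"
        self._admit(namespace, index)
        return index, tier

    def _admit(self, namespace: str, index) -> None:
        self.memory[namespace] = index
        if len(self.memory) > self.memory_slots:
            # Demote the least recently used index to SSD instead of dropping it.
            old_ns, old_index = self.memory.popitem(last=False)
            self.ssd[old_ns] = old_index
            if len(self.ssd) > self.ssd_slots:
                self.ssd.popitem(last=False)
```

A workspace that falls out of memory is served from the SSD tier on its next query rather than paying the full object-storage round trip. That middle tier is what a single-tier design lacks.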

The billing model can also surprise teams: charging by namespace size rather than query workload means large workspaces can look expensive even when individual queries scan small subsets. In skewed multi-tenant distributions, spend can diverge from query-level intuition.

Filter recall is another latent issue for ANN-plus-post-filter approaches. When filters are narrow — page type, collaborator, time range — candidate pools can become too small, leading to a drop in recall. As Notion adds more filtered AI retrieval paths, this trade-off tends to show up more often.
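A small, deterministic example makes the failure mode visible. In this sketch an exact nearest-neighbor scan stands in for ANN, and the one-dimensional vectors, the `type` field, and the function names are all made up for illustration.

```python
def top_k(query, docs, k):
    """Exact nearest neighbors by squared Euclidean distance (ANN stand-in)."""
    def distance(doc):
        return sum((q - x) ** 2 for q, x in zip(query, doc["vec"]))
    return sorted(docs, key=distance)[:k]


def post_filter_search(query, docs, k, predicate):
    # Search first, filter afterwards: the candidate pool is capped at k.
    return [d for d in top_k(query, docs, k) if predicate(d)]


def pre_filter_search(query, docs, k, predicate):
    # Filter first, then search only among matching documents.
    return top_k(query, [d for d in docs if predicate(d)], k)


# 200 documents on a line; only every 50th one is a "meeting_notes" page.
docs = [
    {"id": i, "vec": [float(i)], "type": "meeting_notes" if i % 50 == 0 else "page"}
    for i in range(200)
]


def is_notes(doc):
    return doc["type"] == "meeting_notes"


post = post_filter_search([24.0], docs, 10, is_notes)
pre = pre_filter_search([24.0], docs, 3, is_notes)
```

Here `post` comes back empty, because none of the ten nearest documents match the filter, while `pre` returns documents 0, 50, and 100. Real systems mitigate this by over-fetching candidates or by filter-aware index traversal, and both cost extra work per query.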

So yes, the current trade-off is good. The limits are also clear: large tenants and cold-query latency are where the first cracks will appear.

Problem 2: The real-time/offline split keeps getting more expensive

Notion's current stack: Spark + Airflow for offline batch processing, Kafka consumers for real-time updates, Ray for embedding, turbopuffer for queries. Four systems, each doing its job well, all operating on the same underlying data: users' page content.

This is the data silo structure in its classic form. The same source data is maintained as multiple views by different systems, with application-layer logic responsible for keeping them in sync. Page State's xxHash + DynamoDB exists specifically to maintain consistency between the batch-processing view and the real-time view. That logic is correct, but its complexity scales linearly with the number of AI features: each new feature adds another set of synchronization logic that has to be maintained across the real-time and offline paths.

The deeper problem: offline processing can't directly improve online serving without a synchronization step. If Notion's offline pipeline discovers strong semantic relationships between documents in a workspace, those relationships can't improve AI Q&A retrieval until the results are written somewhere the online query logic can read. There's a system boundary in between, with latency and consistency risk attached.

This is what makes the data flywheel hard to spin: signals generated by online usage can't continuously improve offline models without crossing a system boundary in both directions.

The architectural answer is OneData: online serving and offline processing share the same underlying table. No multiple views to synchronize, no application-layer consistency logic. One Iceberg table, different compute modes, reading and writing to the same place. The offline layer makes the online layer smarter; the online layer generates signals that the offline layer processes. The flywheel runs on a single foundation.

Problem 3: Offline context engineering — where the real moat gets built

Notion AI today: user asks a question → retrieve relevant chunks in real time → feed to LLM → return answer. The quality ceiling on this pipeline is determined by what's in the index.

The gap that offline context engineering fills is not retrieval quality at query time — it's the quality of what you've built before the query arrives.

Memory. Every conversation with Notion AI contains a signal: what the user cares about, how they work, what knowledge matters to them. Vectorizing that signal and organizing it into retrievable user memory is straightforward. Maintaining it is not: old memories need compression (a conversation from six months ago needs to become a denser representation), stale memories need updating (when new content contradicts old records), and related memories need to be organized hierarchically. All of this is continuous offline batch processing over vector data. It can't be done at query time.
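To show the shape of such a batch job, here is a minimal sketch that folds memories older than a cutoff into one denser record per topic. It rests on loud assumptions: the record fields and the function name are hypothetical, and averaging embeddings is only a placeholder for whatever compression (summarization, clustering) a real system would use.

```python
from datetime import datetime, timedelta


def compress_old_memories(memories: list, now: datetime, max_age_days: int = 90) -> list:
    """Keep recent memories as-is; merge older ones into one record per topic."""
    cutoff = now - timedelta(days=max_age_days)
    recent = [m for m in memories if m["created_at"] >= cutoff]

    old_by_topic = {}
    for m in memories:
        if m["created_at"] < cutoff:
            old_by_topic.setdefault(m["topic"], []).append(m)

    compressed = []
    for topic, group in old_by_topic.items():
        dim = len(group[0]["vec"])
        # Placeholder compression: the centroid of the group's embeddings.
        centroid = [sum(m["vec"][i] for m in group) / len(group) for i in range(dim)]
        compressed.append({
            "topic": topic,
            "vec": centroid,
            "created_at": max(m["created_at"] for m in group),
            "source_count": len(group),
            "compressed": True,
        })
    return recent + compressed
```

Even this toy version has to scan every memory, group the old ones, and write back new records, which is why the work belongs in a scheduled batch job rather than on the query path.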

Precomputed knowledge graphs. Rather than searching "which documents are relevant to this query" fresh every time, offline processing can build a model of the workspace's semantic topology — which documents address the same concepts, which decisions have dependencies, how ideas have evolved across time. That model becomes the context the AI already has, so it's reasoning from an understanding of your workspace rather than doing a fresh search from scratch.

The data flywheel. Which retrieval results got used? Which questions keep coming up without satisfying answers? Which documents get referenced repeatedly across different queries? These signals, processed offline, can continuously tune retrieval — adjusting chunking strategies, surfacing knowledge that keeps proving relevant, and identifying blind spots. The flywheel only turns if there's infrastructure to translate signals into improvements in the vector layer. Without that, the signals are just bytes in a log file.
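The first step of that translation is plain aggregation over usage logs. The sketch below assumes a hypothetical log format (one entry per question, with the IDs of documents the answer actually cited) and an arbitrary threshold of two occurrences; neither comes from Notion's post.

```python
from collections import Counter


def summarize_signals(log: list, min_count: int = 2) -> dict:
    """Turn raw retrieval logs into two offline work queues."""
    cited = Counter()
    unanswered = Counter()
    for entry in log:
        if entry["cited_doc_ids"]:
            cited.update(entry["cited_doc_ids"])
        else:
            # No cited document: treat the question as unsatisfied.
            unanswered[entry["query"].strip().lower()] += 1
    return {
        # Documents that keep proving relevant across queries.
        "boost_candidates": [d for d, n in cited.most_common() if n >= min_count],
        # Questions that keep coming up without a usable answer.
        "blind_spots": [q for q, n in unanswered.most_common() if n >= min_count],
    }
```

The output is only useful if something downstream can act on it, for example by re-chunking a blind-spot area or re-weighting a frequently cited document in the vector layer.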

Notion sits on one of the most interesting AI datasets in existence: structured knowledge, deliberately organized, across tens of millions of workspaces. That's an extraordinary raw material. But a data moat isn't built by having data — it's built by having infrastructure that continuously turns data into capability. Their current vector infrastructure is designed for online serving. Memory maintenance, knowledge graph precomputation, signal processing flywheels — these are large-scale batch problems that don't have a natural home in the current architecture.

Lakebase Positioning

At this point, the pattern is clear. The next bottleneck is not a single query engine decision. It is the gap between real-time serving and offline processing.

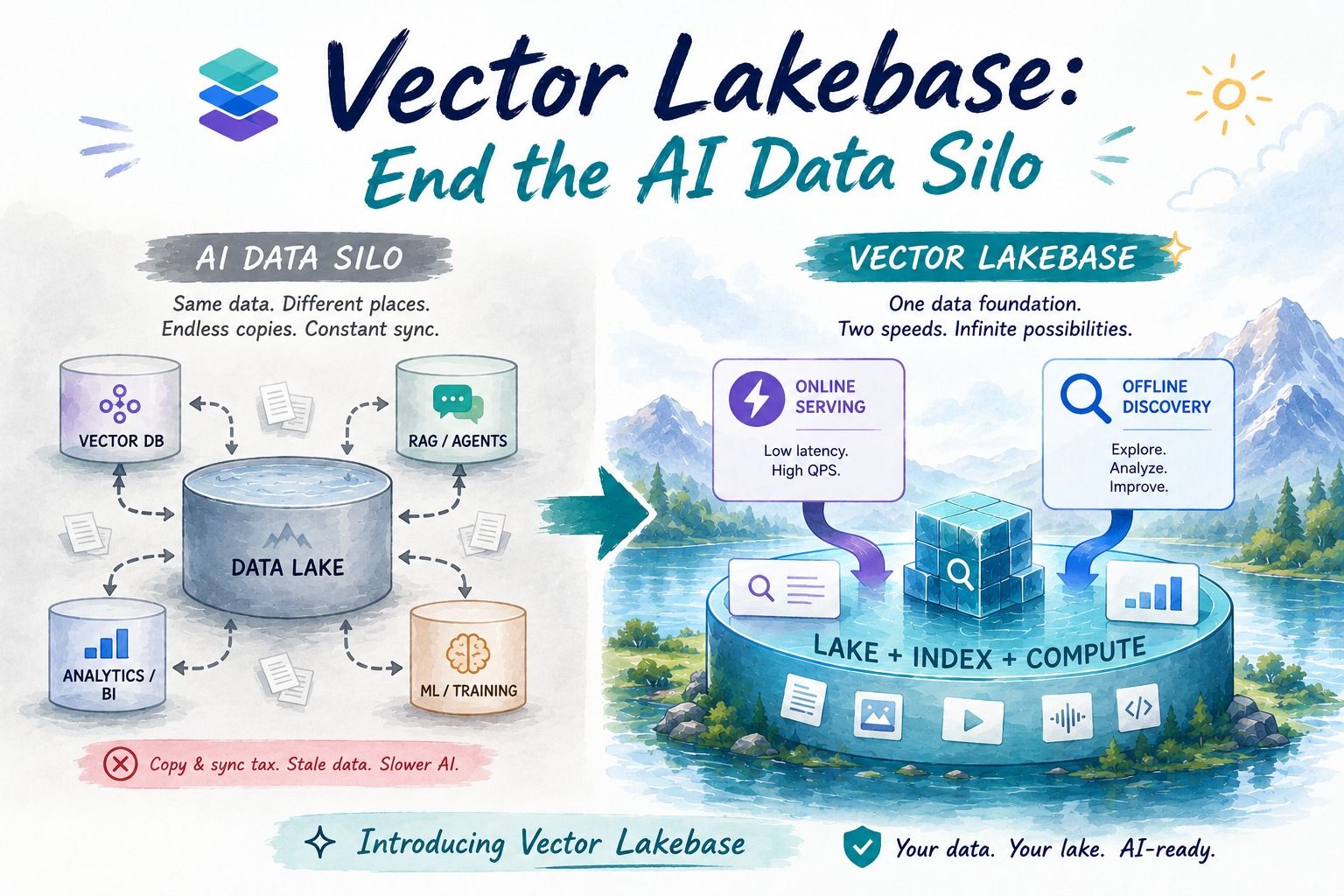

Vector Lakebase is positioned as the bridge:

One unified platform for OLTP and OLAP. Real-time serving, iterative discovery, and batch analytics run on the same lake-native data foundation, with one source of truth.

One incremental flow instead of dual-path sync. Change capture, re-embedding, and re-indexing become data-layer capabilities, not application glue code.

One place where offline intelligence lands online. Memory compression, backfills, and quality signals write back to the same table and improve serving directly.

That is the practical Chapter 2: not just better search, but a unified operating model for vector data.

Vector Lakebase is Zilliz Cloud's answer to Chapter 2: unified vector storage, processing, and serving on your existing data lake. No migration required. Stay tuned.
