Introducing Functions and Model Inference on Zilliz Cloud: Automatic Embedding and Reranking with Hosted Models

AI search pipelines built on vector databases usually require you to generate embeddings yourself, insert them into the vector database for similarity retrieval, embed every query the same way, and bolt on a separate reranking service if you want better result quality. It works, but it means more glue code and more places for things to drift.

Today, we're announcing Functions and Inference Services on Zilliz Cloud, now in Public Preview for third-party models and Private Preview for Zilliz Hosted Models. You insert raw text and search with natural language; Zilliz Cloud handles embedding generation, vector storage, and result reranking automatically.

What Are Functions and Inference Services on Zilliz Cloud?

A Function is a declarative operation attached to a collection that tells Zilliz Cloud how to process your data. Instead of sending vectors, you send raw text. Instead of embedding queries client-side, you send text queries directly. Zilliz Cloud handles the rest.

Functions fall into two categories:

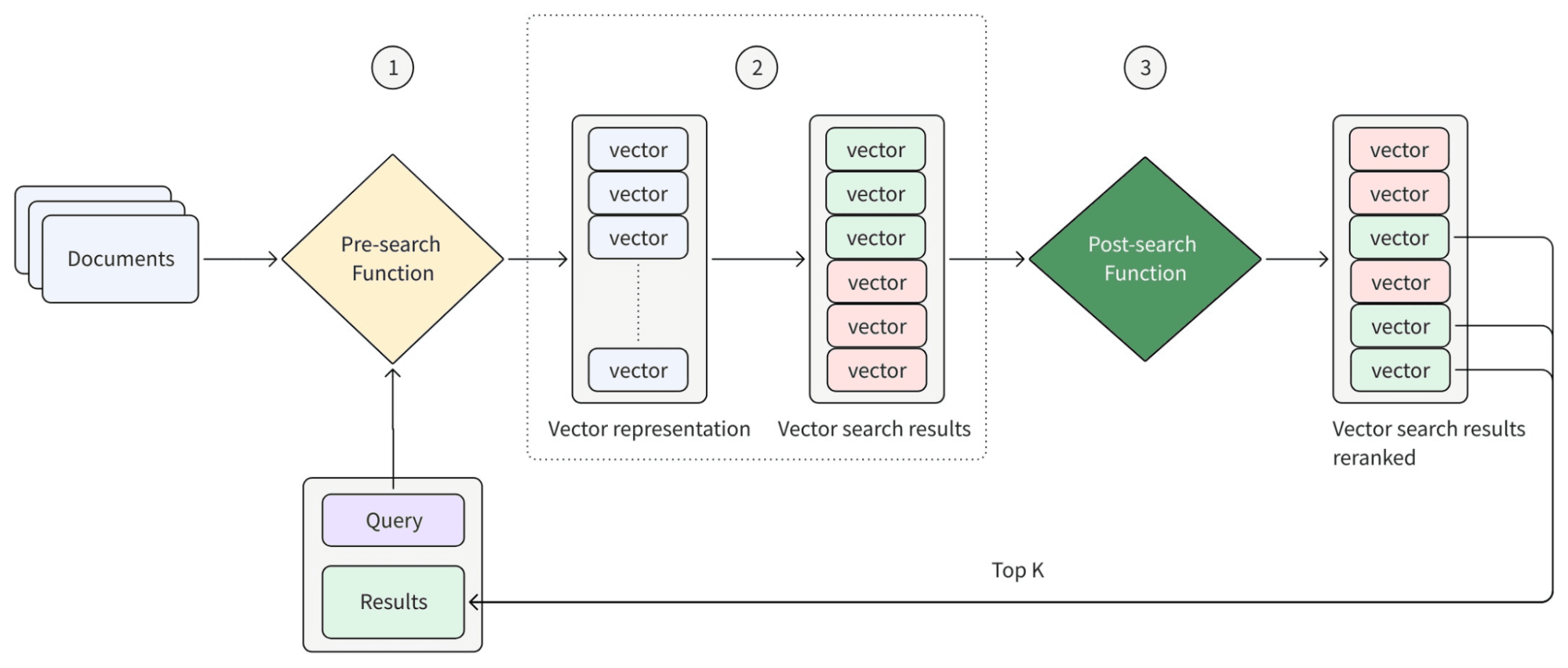

- Pre-search Functions run at ingestion and query time, converting text into searchable representations. This includes BM25 for full-text keyword search (no model required) and model-based approaches that produce dense embeddings for semantic search.

- Post-search Functions run after retrieval, refining, and reordering results. This includes hybrid rankers that merge multiple result sets, rule-based rankers for business logic, and model-based rankers that score relevance between queries and documents.

The following diagram provides an abstraction of how Functions work in the search workflow.

Inference Services power the model-based Functions. When a Function needs to generate an embedding or score a query-document pair, it calls a model from one of two sources:

| Source | How It Works |
|---|---|
| Third-party providers (OpenAI, Voyage AI, Cohere) | You bring your API key. Zilliz Cloud manages the integration. |
| Zilliz Hosted Models | Fully managed model instances on Zilliz's GPU infrastructure. Your data never leaves the platform. |

The simplest distinction: Functions define what happens to your data. Inference Services define which model does the work.

Why Move Embedding and Reranking Into Zilliz Cloud?

If you're calling an embedding API and inserting vectors into Zilliz Cloud today, that already works. But as applications scale, several friction points emerge.

Model Consistency Becomes Your Problem

Your ingestion path and query path must use the exact same model. If they drift — say, a deployment updates one side but not the other — search quality degrades silently. With Functions, the collection owns the model configuration. Ingestion and query are guaranteed to match.

Reranking Gets Skipped Because It's Too Much Friction

Model-based reranking meaningfully improves result quality, especially for hybrid search. But adding another service call after every query — with its own API key, latency budget, and failure handling — is enough friction that many teams ship without it. When reranking is a built-in Function, that friction disappears.

Credentials Sprawl Across Services

Every service that writes or searches data needs your embedding provider's API key. With Functions, credentials live in Zilliz Cloud's Model Provider Integration — one place to manage, one place to rotate keys, no secrets in application code.

Data Leaves Your Network on Every Inference Call

For teams with privacy or compliance requirements, sending raw text to an external API on every insert and query is a real concern. Hosted Models keep everything — data, inference, storage, search — within Zilliz's private network.

What's Available in Public Preview

Model-Based Embedding Functions

Attach an embedding model to a collection. From that point on:

- Insert raw text via Insert, Upsert, or Import — Zilliz Cloud generates and stores dense vector embeddings automatically.

- Search with text — the system embeds your query with the same model and runs ANN search.

No client-side embedding code. No model consistency worries. Your application just works with text.
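To make the flow concrete, here is a minimal in-memory sketch of a collection that owns its embedding function. It is a conceptual model only, not the Zilliz Cloud API: `ToyCollection` and `letter_count_embedding` are hypothetical names, and the letter-count "model" is a deliberately trivial stand-in for a real embedding model. The point it illustrates is that insert and search both pass through the same function, so the two paths cannot drift apart.

```python
import math
from collections import Counter
from typing import Callable, List, Tuple

Vector = List[float]


def letter_count_embedding(text: str) -> Vector:
    """Toy stand-in for an embedding model: a 26-dim vector of letter counts."""
    counts = Counter(ch for ch in text.lower() if "a" <= ch <= "z")
    return [float(counts.get(chr(ord("a") + i), 0)) for i in range(26)]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class ToyCollection:
    """Hypothetical in-memory collection that owns its embedding function."""

    def __init__(self, embed: Callable[[str], Vector]) -> None:
        self._embed = embed  # configured once, on the collection
        self._rows: List[Tuple[str, Vector]] = []

    def insert(self, text: str) -> None:
        # Callers pass raw text; the collection derives and stores the vector.
        self._rows.append((text, self._embed(text)))

    def search(self, query: str, limit: int = 3) -> List[Tuple[str, float]]:
        # The query goes through the same function that was used at insert time.
        q = self._embed(query)
        scored = [(text, cosine(q, vec)) for text, vec in self._rows]
        scored.sort(key=lambda pair: pair[1], reverse=True)
        return scored[:limit]


docs = ToyCollection(letter_count_embedding)
docs.insert("How do I reset my password?")
docs.insert("Download your monthly invoice")
docs.insert("Configure single sign-on")

top_text, top_score = docs.search("How do I reset my password?", limit=1)[0]
print(top_text, round(top_score, 3))
```

Because the application never touches vectors, swapping the model becomes a change to the collection's configuration rather than to every client that writes or searches.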

Model-Based Reranking Functions

Select a reranking model and apply it as a built-in post-search step. This is especially powerful for hybrid search, where you combine semantic and keyword retrieval into one result set.

Model-based rerankers go beyond vector similarity — they read the content of each candidate and evaluate how well it actually answers the query. It's the difference between "these vectors are nearby" and "this document answers the question."
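As a rough picture of what a post-search reranking step does, the sketch below re-scores each retrieved candidate against the query text and reorders the list. The scoring function here is a simple term-overlap heuristic chosen only so the example runs without a model; a real reranking model is learned and far more capable. The names `rerank` and `term_overlap_score` are hypothetical and not part of any Zilliz Cloud SDK.

```python
from typing import Callable, List, Tuple


def term_overlap_score(query: str, document: str) -> float:
    """Toy stand-in for a reranking model: fraction of query terms found in the document."""
    q_terms = set(query.lower().split())
    d_terms = set(document.lower().split())
    return len(q_terms & d_terms) / len(q_terms) if q_terms else 0.0


def rerank(
    query: str,
    candidates: List[str],
    score: Callable[[str, str], float],
    top_k: int,
) -> List[Tuple[str, float]]:
    """Score every (query, candidate) pair and return the top_k by score."""
    scored = [(doc, score(query, doc)) for doc in candidates]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Candidates in the order a first-stage retrieval might have returned them.
candidates = [
    "password policy overview for administrators",
    "steps to reset a forgotten password",
    "reset the router to factory settings",
]
query = "reset forgotten password"

reranked = rerank(query, candidates, term_overlap_score, top_k=2)
for doc, relevance in reranked:
    print(f"{relevance:.2f}  {doc}")
```

The first-stage order is treated only as a candidate pool; the final order comes from reading each candidate alongside the query.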

Supported Providers

| Provider | Embedding | Reranking |
|---|---|---|
| OpenAI | Yes | -- |
| Voyage AI | Yes | Yes |
| Cohere | Yes | Yes |

Model Provider Integration

Register your third-party API credentials once in the Zilliz Cloud console via Model Provider Integration. Collections reference the integration by ID — no keys in code. Rotate credentials in one place; every collection using that integration picks up the change automatically.
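The reference-by-ID pattern can be sketched in a few lines. This is an illustrative model of the idea, not the console or SDK: `IntegrationRegistry`, `CollectionConfig`, and the ID and key strings are all hypothetical. It shows why rotation is a single operation: collections store only the integration ID, and the secret is resolved centrally at call time.

```python
from dataclasses import dataclass
from typing import Dict


class IntegrationRegistry:
    """Hypothetical central credential store keyed by integration ID."""

    def __init__(self) -> None:
        self._keys: Dict[str, str] = {}

    def register(self, integration_id: str, api_key: str) -> None:
        self._keys[integration_id] = api_key

    def rotate(self, integration_id: str, new_api_key: str) -> None:
        # Rotation only makes sense for an integration that already exists.
        if integration_id not in self._keys:
            raise KeyError(f"unknown integration: {integration_id}")
        self._keys[integration_id] = new_api_key

    def resolve(self, integration_id: str) -> str:
        return self._keys[integration_id]


@dataclass(frozen=True)
class CollectionConfig:
    name: str
    integration_id: str  # a reference only; no secret is stored here


registry = IntegrationRegistry()
registry.register("integ-example", "key-v1")

articles = CollectionConfig("articles", "integ-example")
tickets = CollectionConfig("tickets", "integ-example")

# One rotation, performed in one place.
registry.rotate("integ-example", "key-v2")

# Both collections now resolve to the new key without any change to their config.
resolved = [registry.resolve(c.integration_id) for c in (articles, tickets)]
print(resolved)
```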

What's in Private Preview: Hosted Models

For teams where latency, cost, or data residency is a priority, Hosted Models run fully managed model instances on Zilliz's GPU infrastructure. The architectural difference: instead of sending data to an external API, the model runs right next to your data.

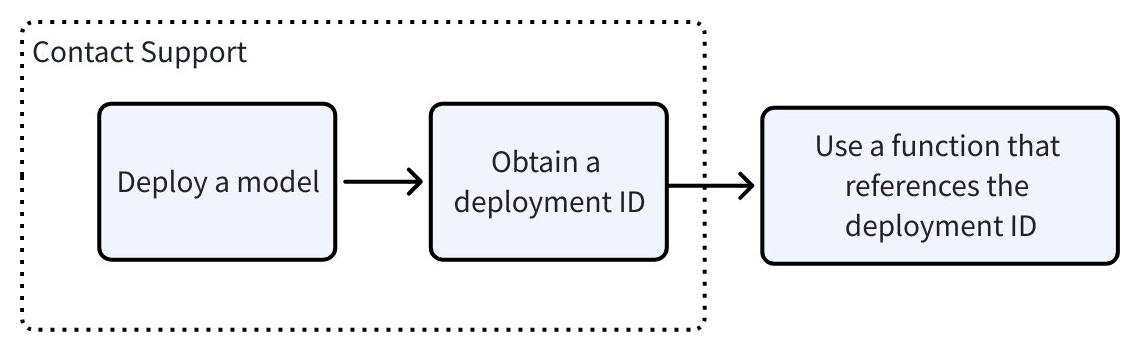

The following diagram shows the procedures for using hosted models.

| Benefit | What It Means |
|---|---|
| Zero data transfer fees | Inference happens within the Zilliz network |
| Lower latency | No external round-trip for embedding or reranking |
| Enhanced privacy | Raw text never leaves the Zilliz environment |
| Dedicated resources | No noisy-neighbor performance issues |

Available Models

| Category | Models |
|---|---|
| Embedding | Qwen3-Embedding (0.6B, 4B, 8B), BAAI BGE series (small, base, large; EN and ZH) |
| Reranking | Qwen3-Reranker (0.6B, 4B, 8B), BAAI BGE Reranker (base, large) |
| Semantic Highlighter | zilliz/semantic-highlight-bilingual-v1 (highlights relevant text segments in results) |

Hosted Models are available by request. Contact the Zilliz team to get access.

Full Function and Inference Capabilities at a Glance

Pre-Search Functions

| Function | Description | Status |
|---|---|---|
| BM25 | Sparse embeddings for full-text keyword search (no model required) | GA |
| Model-Based Embedding (3rd-party) | Dense embeddings via OpenAI, Voyage AI, Cohere | Public Preview |
| Model-Based Embedding (Hosted) | Dense embeddings via Zilliz-hosted Qwen3, BGE | Private Preview |

Post-Search Functions

| Function | Description | Status |
|---|---|---|
| Hybrid Rankers | Merge results from multiple retrieval strategies (e.g., semantic + keyword) | GA |
| Rule-Based Rankers | Apply business logic: recency, popularity, custom scores | GA |
| Model-Based Rankers (3rd-party) | Semantic reranking via Voyage AI, Cohere | Public Preview |
| Model-Based Rankers (Hosted) | Semantic reranking via Zilliz-hosted Qwen3, BGE | Private Preview |

BM25, hybrid rankers, and rule-based rankers are already generally available. Today's release adds model-based embedding and ranking, plus the infrastructure to run those models through third-party APIs or directly on Zilliz Cloud.
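For a sense of what a rule-based ranker can look like, here is a small sketch of recency-weighted ranking. The half-life decay formula, the `Hit` type, and the function name are illustrative assumptions; this post does not specify how Zilliz Cloud's rule-based rankers compute their adjustments.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Hit:
    doc_id: str
    score: float     # relevance score from retrieval
    age_days: float  # how old the document is


def apply_recency_decay(hits: List[Hit], half_life_days: float) -> List[Hit]:
    """Halve each hit's score for every half_life_days of age, then sort descending."""
    if half_life_days <= 0:
        raise ValueError("half_life_days must be positive")
    adjusted = [
        Hit(h.doc_id, h.score * 0.5 ** (h.age_days / half_life_days), h.age_days)
        for h in hits
    ]
    adjusted.sort(key=lambda h: h.score, reverse=True)
    return adjusted


hits = [
    Hit("old-guide", 0.90, 60.0),
    Hit("new-guide", 0.80, 0.0),
    Hit("mid-guide", 0.85, 30.0),
]

ranked = apply_recency_decay(hits, half_life_days=30.0)
for h in ranked:
    print(h.doc_id, round(h.score, 3))
```

With a 30-day half-life, the freshest document overtakes an older one that had a higher raw relevance score, which is the kind of business rule this category of ranker exists to express.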

How to Get Started with Zilliz Cloud Functions

Public Preview (available now):

- Sign up or sign in to Zilliz Cloud — new accounts registered with a work email get $100 in free credits

- Set up a Model Provider Integration in the console

- Create a collection with an embedding function

- Insert raw text and search with text — that's it

Private Preview (by request):

Contact us to try Hosted Models with dedicated inference.

Full documentation: Function and Model Inference Guide

Frequently Asked Questions

A few questions that come up around embedding, reranking, and managed inference for vector search:

Can a vector database generate embeddings automatically?

Yes. With Zilliz Cloud Functions, you attach an embedding model to a collection and insert raw text — the database generates and stores dense vector embeddings on your behalf. Queries work the same way: send a text query, and the system embeds it with the same model before running ANN search. This eliminates client-side embedding code and guarantees model consistency between ingestion and search.

What is model-based reranking, and how does it improve vector search?

Model-based reranking is a post-retrieval step where a language model evaluates how well each candidate document actually answers the query — rather than relying solely on vector similarity scores. It's especially effective for hybrid search pipelines that combine keyword and semantic retrieval. On Zilliz Cloud, you can apply model-based reranking as a built-in Function using providers like Voyage AI or Cohere, or through Zilliz Hosted Models.

What is the difference between hosted and third-party embedding models?

Third-party models (OpenAI, Voyage AI, Cohere) run on the provider's infrastructure — you supply an API key and pay per call. Hosted Models run on Zilliz-managed GPU infrastructure, so your data never leaves the platform. Hosted Models offer lower latency, zero data transfer fees, and dedicated compute with no noisy-neighbor issues. The tradeoff: third-party pay-per-call may be cheaper at low volume, while hosted instances are more cost-effective at scale.

How do you combine keyword search and semantic search in one query?

On Zilliz Cloud, you can attach both a BM25 Function (for keyword search via sparse embeddings) and a model-based embedding Function (for semantic search via dense embeddings) to the same collection. At query time, a hybrid ranker or model-based reranker merges the results into a single ranked list. The collection handles sparse embeddings, dense embeddings, and reranking together — no external orchestration needed.
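One widely used way to merge a keyword result list and a semantic result list is reciprocal rank fusion (RRF). The sketch below is a plain-Python illustration of that technique under our own assumptions; this post does not state which fusion strategy a given hybrid ranker uses, and the document IDs are made up. Each document earns `1 / (k + rank)` from every list it appears in, and `k = 60` is a commonly used default.

```python
from collections import defaultdict
from typing import Dict, List, Sequence


def reciprocal_rank_fusion(
    result_lists: Sequence[Sequence[str]], k: int = 60
) -> List[str]:
    """Fuse ranked lists of document IDs; ranks are 1-based within each list."""
    scores: Dict[str, float] = defaultdict(float)
    for results in result_lists:
        for rank, doc_id in enumerate(results, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first.
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)


dense_hits = ["a", "b", "c"]   # hypothetical semantic (dense) results
sparse_hits = ["b", "d", "a"]  # hypothetical keyword (BM25) results

fused = reciprocal_rank_fusion([dense_hits, sparse_hits])
print(fused)
```

RRF uses only rank positions, so it avoids having to normalize BM25 scores and vector similarity scores onto a common scale before combining them.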
