Exploring LLM-Driven Agents in the Age of AI

In the dynamic realm of Artificial Intelligence (AI), the spotlight shines on groundbreaking technologies such as Large Language Models (LLMs), intelligent agents, and vector databases, captivating scientists, researchers, and enthusiasts worldwide. The LLM-driven Agent stands at the forefront of this innovation, a concept championed by notable figures like Andrej Karpathy and Lilian Weng from OpenAI. This advancement is reshaping our understanding of intelligent systems and redefining the boundaries of what AI can achieve.

This post explores the architecture and functionality of LLM-driven Agents and weighs their merits and limitations.

What is an AI Agent?

LLMs excel at understanding and responding to prompts. Agents are AI systems that elevate this capability by enabling LLMs to make decisions and take action autonomously. In simple terms, Agents are like a fusion of LLM chains and tools.

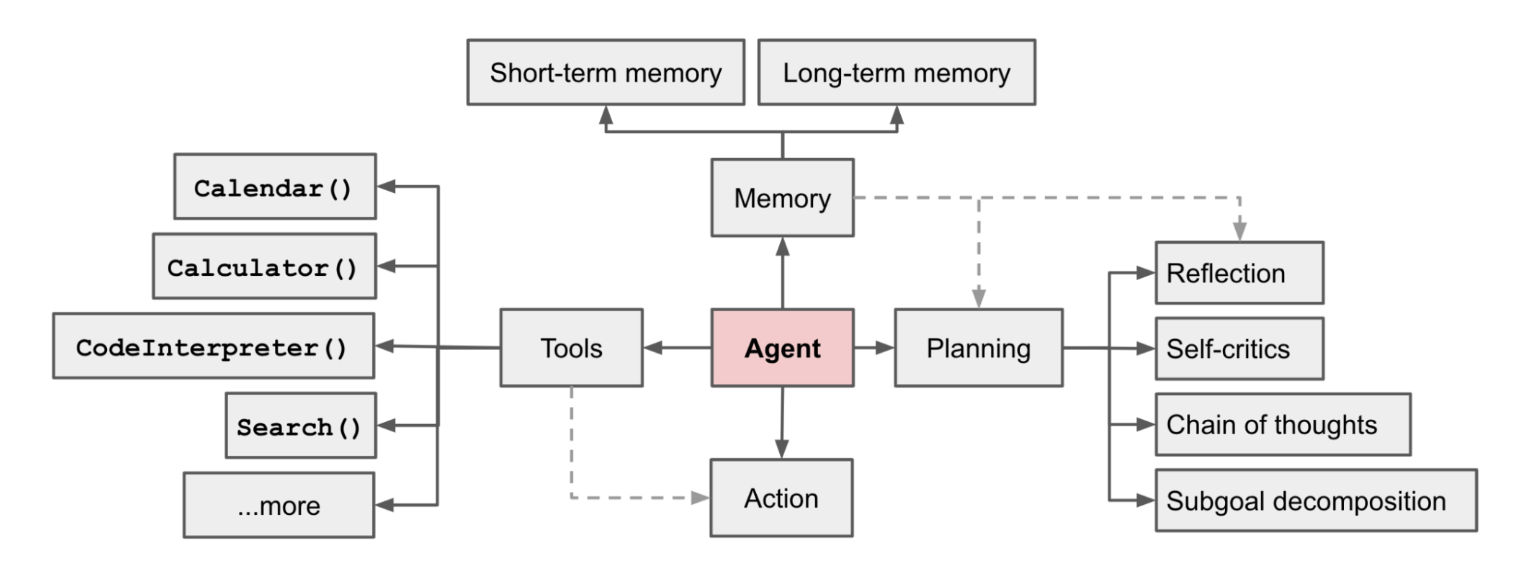

The Agent architecture, by Lilian Weng of OpenAI


At the heart of the LLM-driven Agent lies a sophisticated architecture comprising several vital components: Planning, Memory, and Tools.

The Planning Module is the Agent's command center, enabling it to break complex goals into manageable subtasks. Through this subgoal decomposition, the Agent can work through intricate tasks step by step. The Agent can also reflect on past actions and adjust its strategy, which lets it correct mistakes and improve its decisions over the course of a task.

The Memory Module acts as the Agent's knowledge repository. Short-term memory corresponds to in-context learning: the model picks up what it needs from the content of the current prompt. In contrast, long-term memory lets the Agent retain and recall information over extended periods, typically by storing embeddings in a vector database such as Milvus or Zilliz (fully managed Milvus) and retrieving them quickly through similarity search.
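To make the long-term memory idea concrete, here is a minimal sketch of similarity-based recall. It is not how Milvus works internally: `ToyLongTermMemory` is a hypothetical in-memory stand-in for a vector database, the three-dimensional embeddings are hand-written for illustration (a real system would get them from an embedding model), and the search is a brute-force scan rather than an index.

```python
import math
from dataclasses import dataclass, field
from typing import List, Tuple


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


@dataclass
class ToyLongTermMemory:
    """Hypothetical stand-in for a vector database (illustration only)."""

    records: List[Tuple[List[float], str]] = field(default_factory=list)

    def add(self, embedding: List[float], text: str) -> None:
        """Store a piece of text alongside its embedding."""
        self.records.append((embedding, text))

    def search(self, query: List[float], top_k: int = 2) -> List[str]:
        """Return the top_k stored texts most similar to the query vector."""
        ranked = sorted(
            self.records,
            key=lambda record: cosine_similarity(query, record[0]),
            reverse=True,
        )
        return [text for _, text in ranked[:top_k]]


# Hand-written toy embeddings; a real Agent would compute these with a model.
memory = ToyLongTermMemory()
memory.add([1.0, 0.0, 0.0], "User prefers Python")
memory.add([0.0, 1.0, 0.0], "User lives in Berlin")
memory.add([0.9, 0.1, 0.0], "User dislikes Java")

recalled = memory.search([1.0, 0.0, 0.0], top_k=2)
print(recalled)
```

The recalled texts would then be placed into the prompt, which is how long-term memory feeds back into the short-term context.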

Incorporating external resources, the Tool Module enables Agents to access APIs, obtain real-time information, execute code, and tap into proprietary data sources. This integration of external tools supplements the inherent capabilities of LLMs, bridging the gap between raw model outputs and real-world applicability.
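One simple way to picture the Tool Module is as a registry that maps tool names to functions. The sketch below assumes that design; the names `TOOLS`, `tool`, and `call_tool`, and the two toy tools, are hypothetical and do not come from any particular agent framework.

```python
from typing import Callable, Dict

# Registry of available tools, keyed by the name the model would emit.
TOOLS: Dict[str, Callable[[str], str]] = {}


def tool(name: str) -> Callable[[Callable[[str], str]], Callable[[str], str]]:
    """Decorator that registers a function as a tool under the given name."""

    def register(fn: Callable[[str], str]) -> Callable[[str], str]:
        TOOLS[name] = fn
        return fn

    return register


@tool("calculator")
def calculator(expression: str) -> str:
    """Toy tool: add whitespace-separated numbers, e.g. '2 3.5'."""
    return str(sum(float(part) for part in expression.split()))


@tool("word_count")
def word_count(text: str) -> str:
    """Toy tool: count whitespace-separated words."""
    return str(len(text.split()))


def call_tool(name: str, argument: str) -> str:
    """Dispatch a tool call; errors are returned as text instead of raised."""
    if name not in TOOLS:
        return f"error: unknown tool '{name}'"
    try:
        return TOOLS[name](argument)
    except Exception as exc:  # a failing tool should not crash the agent
        return f"error: {exc}"


print(call_tool("calculator", "2 3.5"))
print(call_tool("browser", "https://example.com"))
```

Returning errors as strings is a deliberate choice in this sketch: the error text can be fed back to the model so that it can pick a different tool or fix its arguments.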

How does an AI Agent work?

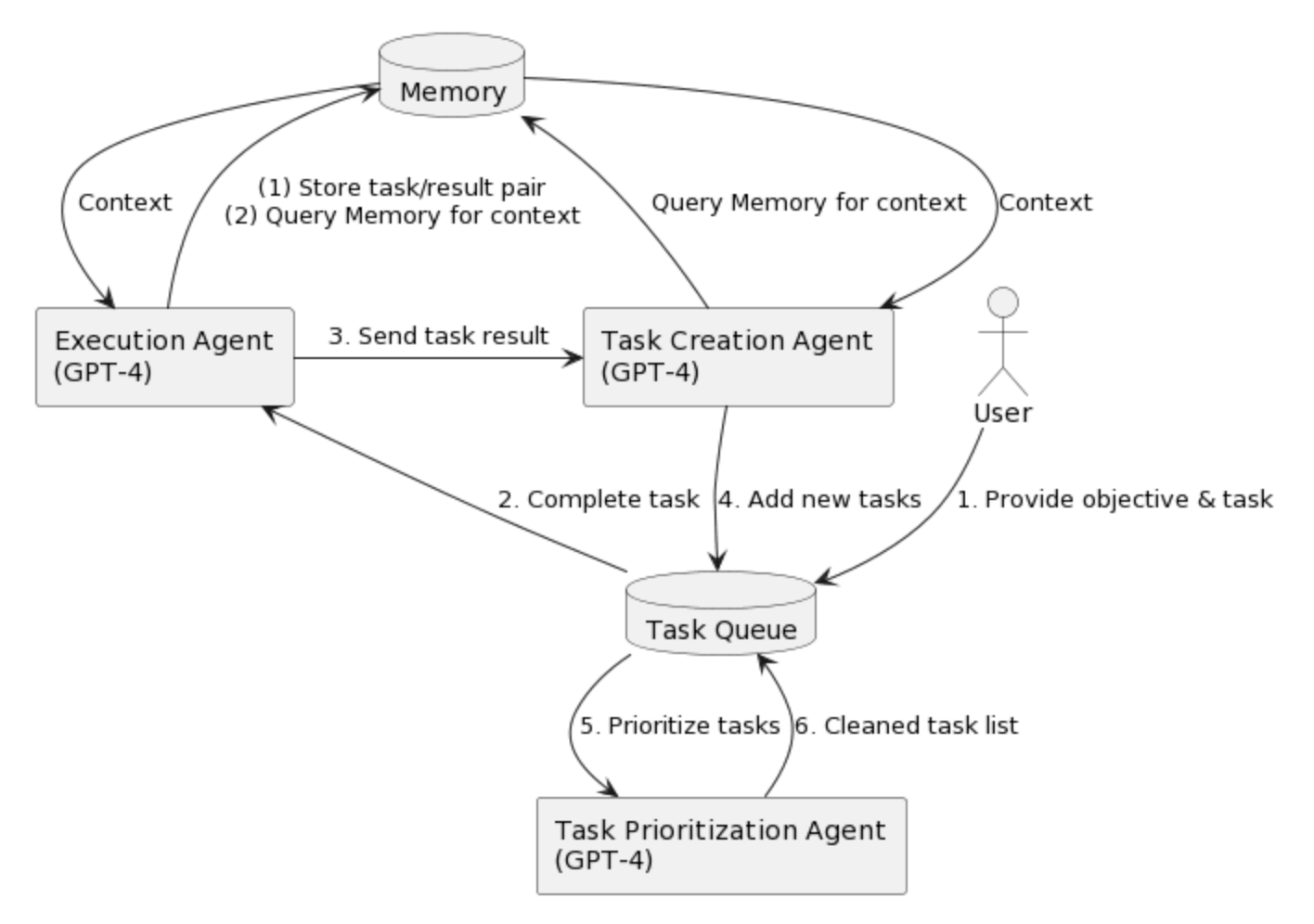

One notable project showcasing the potential of LLM-driven Agents is AutoGPT. Built on GPT-4, AutoGPT generates tasks, prioritizes them, and executes them. It uses plugins for internet browsing and external memory to draw on information from multiple sources. This approach, combined with self-assessment and context-driven decision-making, shows what LLM-driven Agents can do in practice.

AutoGPT workflow


Image source: https://www.lesswrong.com/posts/566kBoPi76t8KAkoD/on-autogpt

Similarly, the BabyAGI project follows a comparable trajectory, emphasizing the importance of context-awareness and self-correction. These projects highlight a fundamental distinction: while traditional LLMs serve as tools in a workflow, LLM-driven Agents orchestrate subgoals, providing a comprehensive approach to task execution.
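The generate-and-execute cycle described above can be sketched as a task queue. This is a simplified illustration, not the actual AutoGPT or BabyAGI code: `run_task_loop` is a hypothetical name, the two callbacks stand in for LLM calls and are faked with a lookup table, and a first-in-first-out queue takes the place of real task prioritization.

```python
from collections import deque
from typing import Callable, Dict, List, Tuple


def run_task_loop(
    objective: str,
    execute: Callable[[str], str],
    propose: Callable[[str, str], List[str]],
    max_steps: int = 10,
) -> List[Tuple[str, str]]:
    """Execute tasks from a queue, adding any new follow-up tasks proposed."""
    queue = deque([objective])
    completed: List[Tuple[str, str]] = []
    while queue and len(completed) < max_steps:
        task = queue.popleft()
        result = execute(task)  # in a real agent: an LLM or tool call
        completed.append((task, result))
        for new_task in propose(task, result):  # in a real agent: an LLM call
            already_known = {done for done, _ in completed} | set(queue)
            if new_task not in already_known:  # skip duplicates
                queue.append(new_task)
    return completed


# Fake "model" for illustration: a fixed table of follow-up tasks.
PLAN: Dict[str, List[str]] = {
    "write report": ["gather sources", "draft outline"],
    "gather sources": [],
    "draft outline": ["gather sources"],
}


def fake_execute(task: str) -> str:
    return f"done: {task}"


def fake_propose(task: str, result: str) -> List[str]:
    return PLAN.get(task, [])


history = run_task_loop("write report", fake_execute, fake_propose)
for task, result in history:
    print(task, "->", result)
```

The `max_steps` cap and the duplicate check are there because nothing else in this loop guarantees that it terminates.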

Challenges faced by Agents

Despite their potential, LLM-driven Agents face challenges of their own. Real-world applications have exposed limitations, including a tendency to get stuck in loops, prompt length constraints, and occasional failure to retrieve crucial information. These hurdles underscore the need for continued refinement of both LLMs and the Agent framework.
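As an illustration of how the looping problem might be contained, the sketch below combines a step budget with a check for repeated identical actions. This is one possible mitigation written for this post, not a technique the projects above are confirmed to use; `LoopGuard` and its parameters are hypothetical.

```python
from collections import Counter
from typing import Optional


class LoopGuard:
    """Stops an agent that exceeds a step budget or keeps repeating itself."""

    def __init__(self, max_steps: int = 20, max_repeats: int = 2) -> None:
        self.max_steps = max_steps
        self.max_repeats = max_repeats
        self.steps = 0
        self.seen: Counter = Counter()

    def check(self, action: str, argument: str) -> Optional[str]:
        """Return a reason to stop, or None if the action may proceed."""
        self.steps += 1
        if self.steps > self.max_steps:
            return "step budget exhausted"
        self.seen[(action, argument)] += 1
        if self.seen[(action, argument)] > self.max_repeats:
            return f"repeated {action}({argument!r}) too often"
        return None


guard = LoopGuard(max_steps=5, max_repeats=2)
for _ in range(3):
    reason = guard.check("search", "milvus")
    print(reason)
```

An agent loop would call `check` before each action and end the run, or ask the model to re-plan, as soon as a reason is returned.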

Paving the path forward: prospects and possibilities

Looking ahead, the landscape of LLM-driven Agents is full of possibilities. Ongoing research and development efforts are concentrated in three key areas:

LLMs as Agents

LLMs are trained on massive amounts of text data, which gives them the ability to comprehend, generate, and manipulate human language. But LLMs can do more than that. They can also act as “agents” themselves, engaging with users, providing assistance, offering valuable insights, and enabling a wide range of applications.

However, different LLMs vary widely in how well they perform as agents, particularly in long-term reasoning, decision-making, and prompt handling. This is the finding of AgentBench, a multi-dimensional, evolving benchmark that assesses the reasoning and decision-making abilities of LLMs-as-agents in a multi-turn, open-ended generation setting.

Projects like ToolLLM are delving into training complex models to understand and utilize APIs, paving the way for enhanced Agent capabilities.

Agent Frameworks

Researchers are actively exploring the components outlined by Lilian Weng, looking for ways to enhance LLM reasoning without altering the core model. These techniques include Chain of Thought (CoT), ReAct, and Reflexion, which use prompts and feedback mechanisms to augment the Agent's reasoning capabilities. Researchers are also exploring communication and collaboration among multiple Agents, broadening the scope of Agent interaction.
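To show the shape of a ReAct-style loop, in which the model alternates between reasoning, acting, and reading the resulting observation, here is a minimal sketch. It is not the reference ReAct implementation: the `Action: tool[argument]` and `Final Answer:` text formats are assumptions of this example, `react` is a hypothetical function name, and `scripted_llm` is a hard-coded stand-in for a real model call.

```python
import re
from typing import Callable, Dict

ACTION_RE = re.compile(r"Action:\s*(\w+)\[(.*)\]")
FINAL_RE = re.compile(r"Final Answer:\s*(.*)")


def react(
    question: str,
    llm: Callable[[str], str],
    tools: Dict[str, Callable[[str], str]],
    max_turns: int = 5,
) -> str:
    """Alternate model replies and tool observations until a final answer."""
    transcript = f"Question: {question}\n"
    for _ in range(max_turns):
        reply = llm(transcript)
        transcript += reply + "\n"
        final = FINAL_RE.search(reply)
        if final:
            return final.group(1).strip()
        action = ACTION_RE.search(reply)
        if action:
            name, argument = action.groups()
            handler = tools.get(name)
            observation = handler(argument) if handler else f"unknown tool {name}"
            # The observation is appended so the next model call can see it.
            transcript += f"Observation: {observation}\n"
    return "no answer within turn limit"


def scripted_llm(transcript: str) -> str:
    """Stand-in for a real model: acts first, then answers from the observation."""
    if "Observation:" not in transcript:
        return "Thought: I should look this up.\nAction: lookup[Milvus]"
    observation = transcript.rsplit("Observation: ", 1)[1].strip()
    return f"Thought: I have what I need.\nFinal Answer: Milvus is {observation}."


FACTS = {"Milvus": "an open-source vector database"}
tools = {"lookup": lambda query: FACTS.get(query, "not found")}

answer = react("What is Milvus?", scripted_llm, tools)
print(answer)
```

The core model is never modified here; all of the added reasoning structure lives in the prompt transcript, which is the point of this family of techniques.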

Agent Applications

Building a general-purpose agent application is challenging because the real world has many uncertainties. However, it is possible to build agent applications tailored to specific scenarios. Projects like MetaGPT and Voyager exemplify the potential of Agents in controlled environments, from software development to autonomous exploration in virtual worlds. These specialized designs mark a significant stride toward realizing fully reliable LLM-driven Agents.

Conclusion

LLM Agents mark a shift from simple automation toward systems that can plan, remember, and act. Their evolution continues to shape the future of AI, promising a world where artificial intelligence integrates with human capabilities and changes how we approach complex tasks. As this work continues, the synergy between LLMs and Agents is poised to reshape our technological landscape and to blur the boundaries between human intelligence and artificial ingenuity.
