Exa Builds Entity Search Engine for AI Agents With Zilliz Cloud

<200ms search latency

Exa’s neural search latency reduced from seconds to under 200ms with Zilliz's hybrid search

High reliability

Near-zero operational incidents, freeing engineering time for product work

Zero downtime for schema changes

New filterable fields and metadata can be added without rebuilding indexes or taking collections offline

We believe AI agents will become a fundamental interface for how people work, learn, and make decisions, and that only happens if those systems can access real-world information with speed, precision, and trust. That’s what we’re building at Exa. Aside from web search, Exa also operates entity search, and Zilliz Cloud has been an important part of that journey, giving us the retrieval performance and operational simplicity we need to scale our entity search product quickly and confidently.

Jeffrey Wang

Search for AI agents sounds like a natural extension of web search, but in practice, it demands a different product standard. Agents do not just need links; they need grounded, current, structured information delivered quickly enough to support real workflows, from voice interactions to deep research tasks.

Exa is building exactly that kind of search engine for AI. Its Search API gives developers access to high-quality, low-latency web search across the compute-latency spectrum, from instant search for voice agents to deeper research with structured outputs and enrichments. Exa serves customers ranging from AI-native startups such as Cursor and Lovable to enterprises such as AWS, all of which rely on grounded, real-world context for agent-driven workflows.

As Exa expanded into entity search for companies, people, and code, it faced a more specialized infrastructure challenge: how to support hybrid retrieval, rich metadata filtering, frequent updates, and millisecond-level latency without diverting engineering focus from the core search engine. That is the specific role Zilliz Cloud (fully managed Milvus) plays in the story below.

| Outcome | Details |
| --- | --- |
| <200ms search latency | Hybrid search combining dense vectors, sparse vectors, RRF reranking, and metadata filters in a single API call. Exa Instant reduced neural search latency from seconds to under 200ms. |
| High reliability | The managed service has delivered near-zero operational incidents, freeing engineering time for product work. |
| Zero downtime for schema changes | New filterable fields and metadata can be added without rebuilding indexes or taking collections offline. |

Below is the transcript of a conversation with Exa about its product mission, the shift from general web search to entity search, and how Zilliz Cloud fits into that evolution.

1. Exa’s product promise: grounded search for AI agents

We started by asking Exa to describe the product it is building and the customers it serves, because that context explains why retrieval quality and latency are not secondary concerns for the company.

Q: What product or service does Exa provide, and who are your primary customers?

Exa: Exa is building the search engine for AI. We’ve built a search API that gives developers high-quality, low-latency web search for their agents. Our API offers search across the compute-latency spectrum, from instant (<200ms) searches for voice agents to deep research with structured outputs and enrichments. We specialize in code search, low-latency search, and people/company search, with highlights that ensure token efficiency.

We built our search engine from scratch using novel neural architectures, rather than relying on legacy search engines. Building your own search engine requires everything from training embedding models and rerankers to crawling and indexing billions of web pages. This end-to-end ownership enables us to optimize every layer of the stack for quality and speed. In the recent Exa Instant launch, for example, we landed <200ms search latency—a significant improvement that makes neural search viable as a real-time primitive for AI agents. The combination of quality, speed, and customizability is a key differentiator.

Our customers range from AI-native companies like Cursor and Lovable to large enterprises. Any company using agents to drive knowledge work needs grounded context to respond to the real world, so, regardless of company size, we work with teams that prioritize agent-driven workflows.

2. The inflection point: from web search to entity search

That product context also clarifies why Exa’s database decision was not about replacing its core search stack. Vector search was already foundational to the company. The real change came when entity search introduced new constraints.

Q: At what point in your product journey did you realize you needed a vector database?

Exa: Given that our search engine was built on embeddings and vector similarity, vector search has been an integral part of Exa’s tech stack. As we expanded into entity search, we needed to update our vector database infrastructure to accommodate the structured outputs and enrichments we now offered.

Entity search requires rich metadata schemas, frequent data updates, and managed scalability. Our internal database was optimized for these updated constraints, but we wanted to further improve iteration speed across this entity search layer, prompting us to use Zilliz Cloud. Our core web index remains on our internal infrastructure, and Zilliz Cloud was brought in specifically to power this entity search layer.

Q: What challenges or requirements did you face with your previous solution?

Exa: When we started building entity search, the requirements were very different: hybrid search combining dense and sparse vectors, rich and frequently changing metadata schemas, and the operational overhead of managing multiple specialized collections. We were looking for a managed solution that enabled our engineers to rapidly iterate and support fast responses at scale.

Q: What specific use case(s) are you solving with vector search/vector database?

Exa: Today, Zilliz Cloud powers our entity search layer, serving as both the primary index and the recency cache across entity collections, while our main web index runs on separate internal infrastructure. Each vertical demands low-latency, filtered search over frequently updated data, and Zilliz’s managed hybrid retrieval and hot-upsert capabilities keep results fresh without rebuilding indexes. These verticals feed directly into our Search API, so speed and recall are business-critical.

3. What Exa needed from a managed vector retrieval layer

Once entity search became a distinct layer, the evaluation was really about fit: could a managed system support Exa’s search quality bar without slowing the team down or forcing architectural compromises?

Q: Which vector databases did you evaluate before choosing Zilliz Cloud? What were the key criteria in your evaluation?

Exa: We surveyed all the major vector database options in the space. Our key criteria were:

Hybrid search support: Native ability to combine dense semantic vectors with sparse keyword vectors in a single query, with built-in reranking

Query latency: Consistent fast responses across collections with tens of millions of vectors

Rich metadata filtering: Complex filters on structured fields without degrading search performance

Scalability: Seamless scaling as we add new verticals and data sources

Zilliz Cloud checked every box, and its performance on hybrid search benchmarks was clearly ahead of the field.

Q: How did you first hear about Zilliz Cloud / Milvus?

Exa: We've been aware of Milvus for a long time, as it's one of the most mature open-source vector databases, and as a team that lives and breathes vector search, it's hard to miss. When we started scoping our entity search infrastructure, Zilliz Cloud stood out as the natural managed offering on top of Milvus, with enterprise-grade performance enhancements.

Q: What stood out about Zilliz Cloud during your evaluation? What were the top reasons that led you to choose Zilliz Cloud?

Exa: A few things stood out right away.

Native hybrid search: Zilliz Cloud supports dense and sparse vector search in a single API call, with built-in reranking strategies (RRF, weighted). This was a hard requirement, and several competitors didn't support it natively.

Performance at scale: Their Cardinal indexing engine delivers consistently fast query times even as our collections grow into hundreds of millions of vectors.

Mature filtering: The ability to combine vector search with complex metadata filters in a single request, without a performance cliff.
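To make "vector search with complex metadata filters in a single request" concrete, here is a minimal, self-contained sketch in plain Python. It is not the Zilliz Cloud API and says nothing about how Cardinal works internally. The record fields (`country`, `employees`), the sample companies, and the `filtered_search` helper are all hypothetical. A production engine would use an approximate index rather than this brute-force scan.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def filtered_search(rows, query_vec, predicate, limit=5):
    """Keep rows that pass the metadata predicate, then rank them by similarity."""
    candidates = [row for row in rows if predicate(row)]
    ranked = sorted(candidates, key=lambda row: cosine(query_vec, row["vector"]), reverse=True)
    return ranked[:limit]

# Hypothetical entity records: structured metadata plus a tiny dense vector.
companies = [
    {"name": "Acme", "country": "US", "employees": 1200, "vector": [0.9, 0.1, 0.0]},
    {"name": "Globex", "country": "DE", "employees": 300, "vector": [0.8, 0.2, 0.1]},
    {"name": "Initech", "country": "US", "employees": 40, "vector": [0.7, 0.3, 0.0]},
    {"name": "Hooli", "country": "US", "employees": 9000, "vector": [0.0, 0.1, 0.9]},
]

# One request carries both the query vector and the structured filter.
hits = filtered_search(
    companies,
    query_vec=[1.0, 0.0, 0.0],
    predicate=lambda row: row["country"] == "US" and row["employees"] >= 100,
    limit=2,
)
print([hit["name"] for hit in hits])
```

Globex and Initech are close to the query vector but fail the filter, so they never reach the ranking step. The filter decides which entities are eligible, and similarity only orders the ones that remain.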

In terms of the decisive factors for adoption:

Speed: Zilliz Cloud's query latency met our stringent requirements for production search. Our users expect results in milliseconds, and Zilliz is able to support this.

Hybrid search capabilities: The ability to fuse dense semantic search with sparse BM25 keyword matching and apply Reciprocal Rank Fusion (RRF) reranking in a single API call was important for search quality.

Operational simplicity: As a fully managed service, Zilliz Cloud lets our team focus on building better search experiences and rapidly iterate on improvements to vector-database infrastructure at scale.
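For readers unfamiliar with Reciprocal Rank Fusion, the sketch below shows the standard formula in plain Python: each document's fused score is the sum of `1 / (k + rank)` over every ranked list it appears in. It illustrates the technique only and is not Zilliz Cloud's implementation. The `rrf_fuse` name, the sample entity IDs, and the smoothing constant `k=60` (a commonly used default) are assumptions for the example.

```python
from collections import defaultdict

def rrf_fuse(rankings, k=60, limit=10):
    """Fuse several ranked ID lists with Reciprocal Rank Fusion.

    rankings: iterable of lists, each ordered best-first.
    k: smoothing constant; larger values flatten the gap between ranks.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID so output is deterministic.
    fused = sorted(scores.items(), key=lambda item: (-item[1], item[0]))
    return fused[:limit]

# Hypothetical results from a dense (semantic) and a sparse (keyword) search.
dense_hits = ["acme", "globex", "initech", "umbrella"]
sparse_hits = ["globex", "hooli", "acme"]

fused = rrf_fuse([dense_hits, sparse_hits])
for doc_id, score in fused:
    print(f"{doc_id}: {score:.5f}")
```

Because RRF works on ranks rather than raw scores, it can combine dense similarity scores and BM25 scores without normalizing them to a common scale. Entities that appear in both lists ("globex", "acme") rise above entities that appear in only one.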

4. How the Zilliz and Exa architecture fits together

Q: How does Zilliz Cloud fit into your architecture?

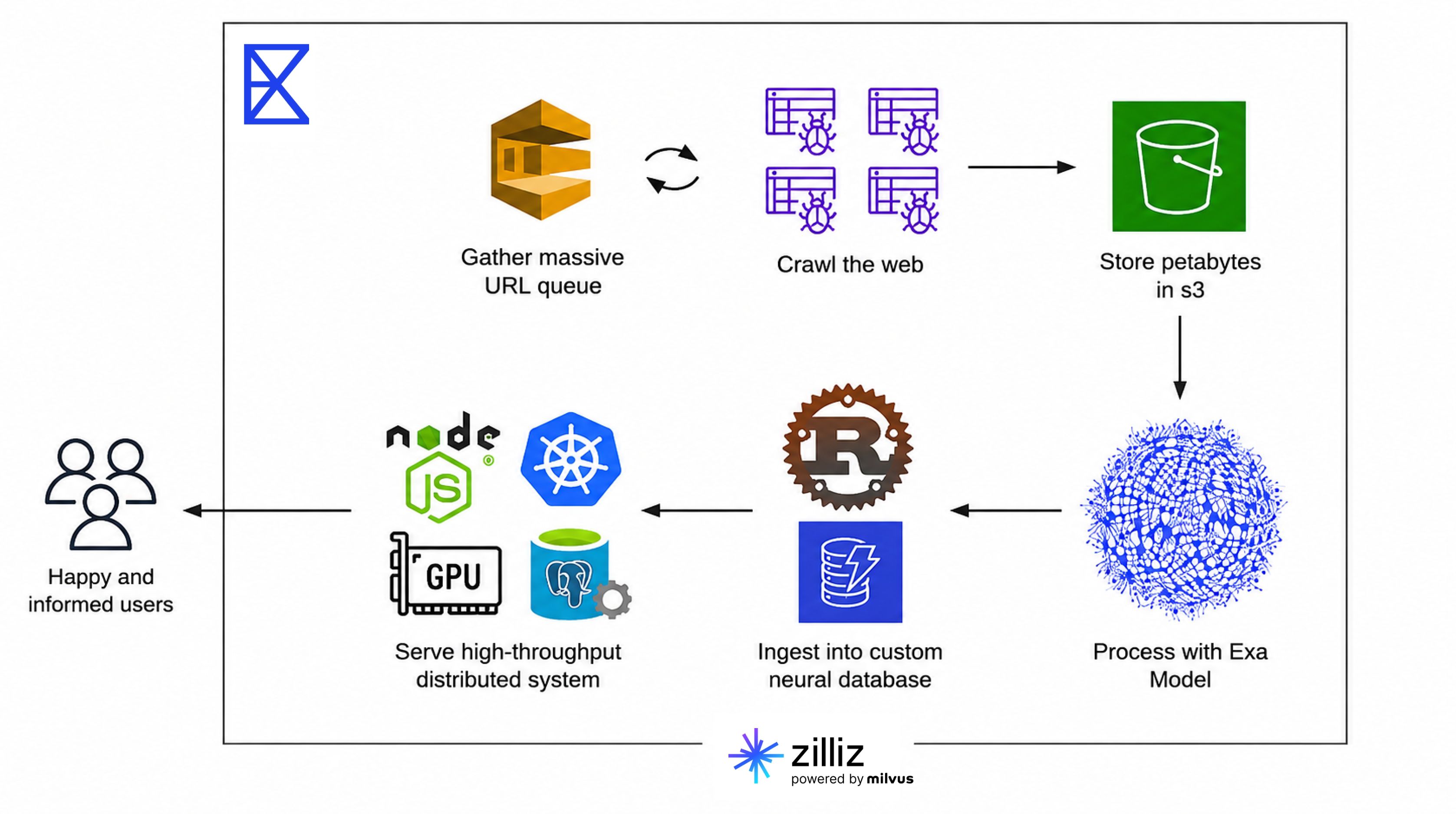

Exa: Our entity search architecture comprises three layers: ingestion, search, and API.

In ingestion, we enrich and embed entity data using our own ML pipelines, then upsert dense and sparse vectors into Zilliz Cloud.

In search, our backend generates embeddings from user queries and dispatches hybrid search requests to Zilliz Cloud, combining semantic and keyword matching with RRF reranking.

In the API layer, results are enriched with structured metadata and served through our Search API and Websets product. Zilliz Cloud sits at the core of retrieval for this workflow: it stores all entity vectors and metadata and handles low-latency search. Our primary web index is built and managed on separate in-house infrastructure.
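The three layers described above can be sketched end to end in a few dozen lines of plain Python. Everything here is a stand-in. `embed_dense` and `embed_sparse` are toy functions, not Exa's models. `EntityStore` is a hypothetical in-memory substitute for the managed retrieval layer. The sample entities and field names are invented for illustration.

```python
import math
from collections import Counter

def embed_dense(text):
    """Toy stand-in for an embedding model: normalized letter frequencies."""
    counts = [text.lower().count(chr(ord("a") + i)) for i in range(26)]
    norm = math.sqrt(sum(c * c for c in counts)) or 1.0
    return [c / norm for c in counts]

def embed_sparse(text):
    """Toy stand-in for a sparse keyword vector: token -> count."""
    return Counter(text.lower().split())

class EntityStore:
    """Hypothetical in-memory stand-in for the managed retrieval layer."""

    def __init__(self):
        self.rows = {}

    def upsert(self, row):
        self.rows[row["id"]] = row  # insert, or overwrite the same primary key

    def hybrid_search(self, dense_q, sparse_q, limit=3, k=60):
        def dense_score(row):
            return sum(a * b for a, b in zip(dense_q, row["dense"]))

        def sparse_score(row):
            return sum(w * row["sparse"][tok] for tok, w in sparse_q.items())

        fused = Counter()
        for score in (dense_score, sparse_score):  # one ranking per vector type
            ranking = sorted(self.rows.values(), key=score, reverse=True)
            for rank, row in enumerate(ranking, start=1):
                fused[row["id"]] += 1.0 / (k + rank)  # RRF contribution
        return [self.rows[row_id] for row_id, _ in fused.most_common(limit)]

def ingest(store, entity):
    """Ingestion layer: embed the entity text and upsert both vectors."""
    text = f'{entity["name"]} {entity["description"]}'
    store.upsert({**entity, "dense": embed_dense(text), "sparse": embed_sparse(text)})

def search(store, query, limit=3):
    """Search layer: embed the query and dispatch one hybrid request."""
    return store.hybrid_search(embed_dense(query), embed_sparse(query), limit=limit)

def api_response(rows):
    """API layer: return structured metadata, not raw vectors."""
    return [{"id": r["id"], "name": r["name"], "hq": r["hq"]} for r in rows]

store = EntityStore()
for entity in [
    {"id": "c1", "name": "Acme Robotics", "description": "industrial robotics company", "hq": "Boston"},
    {"id": "c2", "name": "Globex Foods", "description": "organic snack foods maker", "hq": "Austin"},
    {"id": "c3", "name": "Initech Software", "description": "enterprise payroll software", "hq": "Denver"},
]:
    ingest(store, entity)

results = api_response(search(store, "robotics company", limit=2))
print(results[0])
```

The point of the sketch is the separation of concerns. Embedding stays in application code, the store only sees vectors and metadata, and the API layer decides which fields are returned to the caller.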

Q: What has your team’s experience been like using Zilliz Cloud or Milvus?

Exa: The API is intuitive, the documentation is solid, and the system has been reliable in production. The learning curve was minimal because the Milvus concepts (collections, indexes, search params) map well to how we already think about vector search. The managed nature of Zilliz Cloud means we’ve had very few operational incidents to deal with.

Q: How has the experience been integrating Zilliz Cloud with AWS or other cloud services?

Exa: Seamless. We run our infrastructure primarily on AWS, and Zilliz Cloud fits cleanly into that AWS-native stack. Because it runs on AWS, network latency between our EKS services and Zilliz Cloud is minimal.

5. What changed after adoption

Q: What are the top 3 benefits you’ve seen? Can you share any measurable metrics or improvements?

Exa: The first benefit has been developer velocity: the managed service and clean API meant our team could ship new entity-search verticals rapidly without building or managing additional infrastructure.

Beyond that, schema flexibility has mattered a lot as these vertical datasets evolve, and the search quality we get from AUTOINDEX has also been valuable in practice.
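One way to picture schema flexibility is a collection where a newly added field is nullable, so rows written before the change need no rewrite and simply read back as `None`. The sketch below is a conceptual illustration in plain Python under that assumption. The source does not describe how Zilliz Cloud implements this. The `Collection` class and the field names are hypothetical.

```python
class Collection:
    """Toy collection whose schema can grow without rewriting stored rows."""

    def __init__(self, fields):
        self.defaults = {name: None for name in fields}  # field -> default value
        self.rows = {}

    def upsert(self, row):
        unknown = set(row) - set(self.defaults)
        if unknown:
            raise KeyError(f"fields not in schema: {sorted(unknown)}")
        self.rows[row["id"]] = dict(row)

    def add_field(self, name, default=None):
        """Register a new field; existing rows are left untouched."""
        self.defaults[name] = default

    def get(self, row_id):
        """Read a row, filling fields it never stored with the schema default."""
        stored = self.rows[row_id]
        return {name: stored.get(name, default) for name, default in self.defaults.items()}

    def filter(self, predicate):
        return [row for row in map(self.get, self.rows) if predicate(row)]

people = Collection(["id", "name", "title"])
people.upsert({"id": 1, "name": "Ada", "title": "Engineer"})

# Schema change while the collection stays readable and writable.
people.add_field("seniority")
people.upsert({"id": 2, "name": "Grace", "title": "Admiral", "seniority": "executive"})

senior = people.filter(lambda row: row["seniority"] == "executive")
print(people.get(1), [row["name"] for row in senior])
```

Older rows stay queryable and new rows can be filtered on the added field immediately, which is the behavior the interview describes for evolving vertical datasets.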

Q: What features of Zilliz Cloud do you find most valuable?

Exa: Two things stand out most in day-to-day use.

Filtering without performance cliff: Complex metadata filters layered on top of vector search with negligible latency impact.

Fast vertical launches: Managed scaling enables us to ship new search verticals quickly without standing up new infrastructure every time.

Get started with Zilliz Cloud

Zilliz is the creator of Milvus, the world’s most popular open-source vector database, and Zilliz Cloud, the fully managed vector database service built on Milvus. Zilliz Cloud enables organizations to build production-ready AI applications with high-performance vector search, hybrid retrieval, and enterprise-grade security and compliance.

- Get started with Zilliz Cloud for free with $100 in credits upon registration with a business email

Industry: AI Infrastructure